TL;DR: Continuous testing in CI/CD helps engineering teams release software faster without sacrificing reliability. Instead of treating testing as a final stage before deployment, modern pipelines validate code continuously through unit tests, integration tests, smoke tests, release gates, and production monitoring. By combining automated validation, observability, progressive delivery, and rollback strategies, teams can detect issues earlier, reduce deployment risk, and ship updates confidently at scale.

Modern software teams are expected to release features faster than ever before. Customers expect continuous improvements, businesses demand shorter delivery cycles, and competition leaves very little room for slow release processes. In this environment, releasing software once every few weeks is no longer considered fast. Many engineering teams now deploy multiple times a day.

This shift has fundamentally changed how quality assurance works. Traditional testing strategies were designed for slower release cycles where teams had time for long regression phases before deployment. In a CI/CD environment, that model quickly becomes a bottleneck. If testing cannot keep pace with development velocity, deployments slow down, feedback loops become longer, and engineering teams lose confidence in the release process.

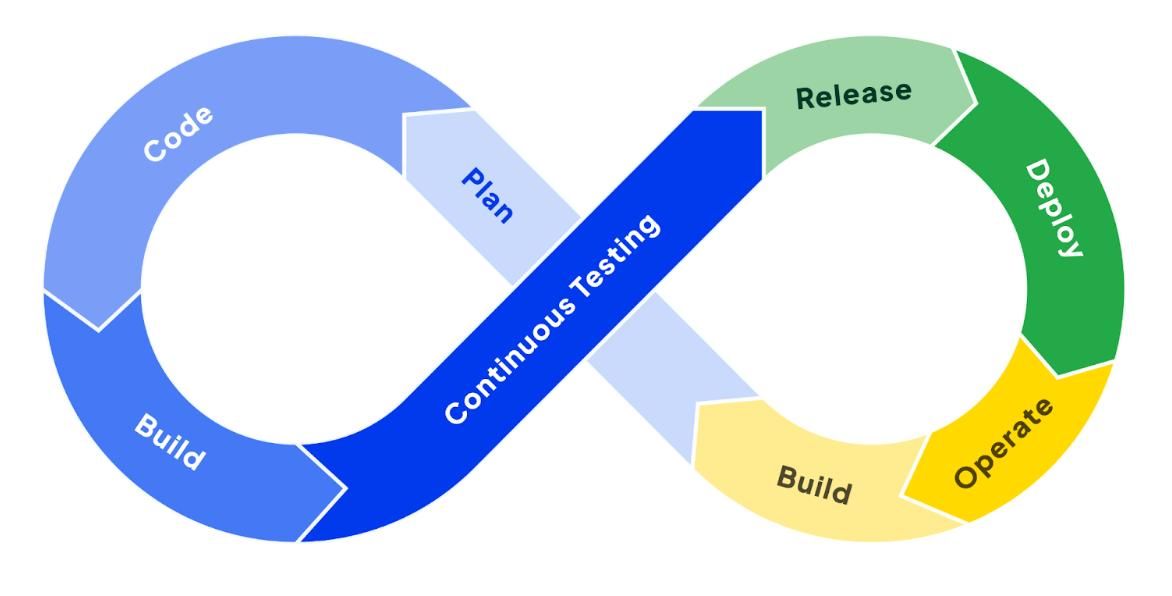

Continuous testing solves this problem by embedding testing into every stage of the software delivery pipeline. Instead of treating testing as a final checkpoint before release, modern teams validate quality continuously from development to production. The objective is not simply to catch bugs. The objective is to create enough confidence that teams can ship rapidly without introducing instability into production systems.

“The faster the release cycle becomes, the more important rapid feedback becomes.”

Continuous testing is what allows modern engineering teams to maintain speed and reliability at the same time.

Understanding Continuous Testing in CI/CD

What Continuous Testing Actually Means

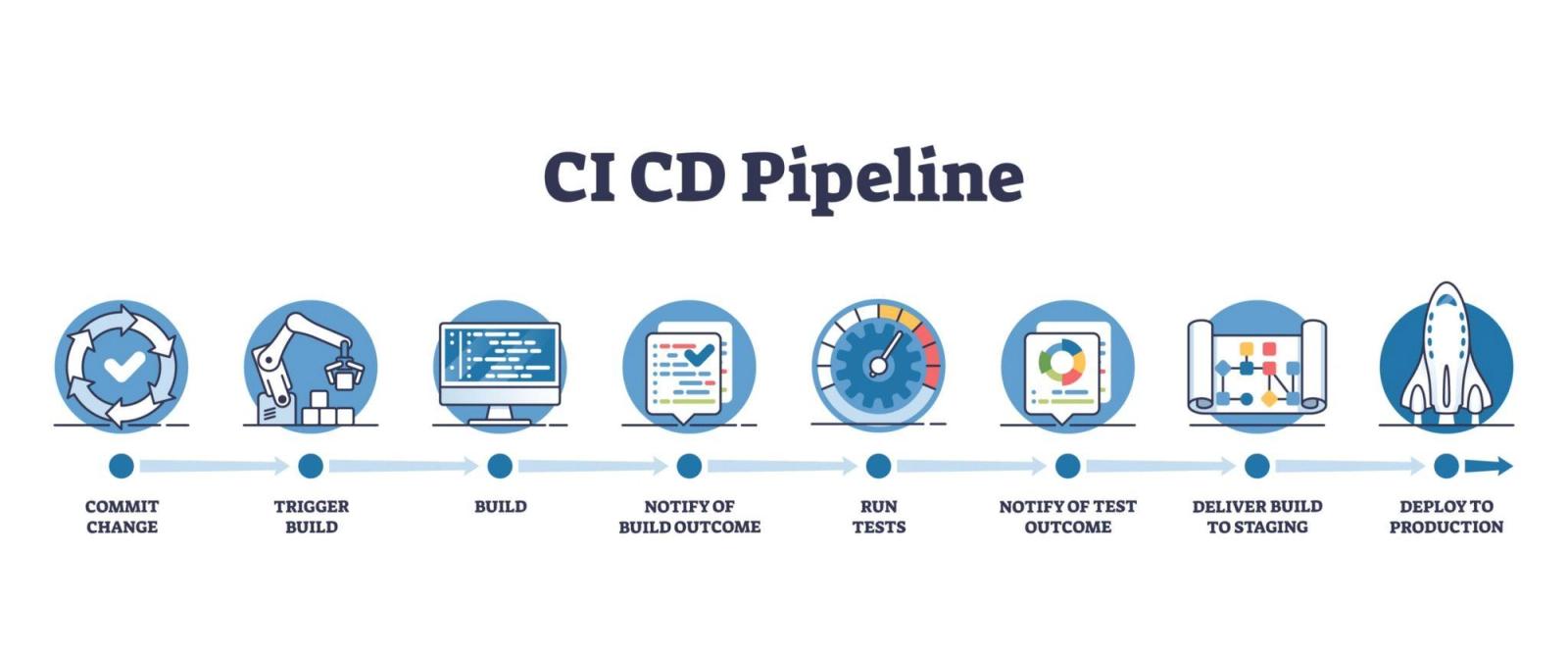

Continuous testing is the practice of executing automated validation throughout the entire software delivery lifecycle. Every code commit, pull request, build, deployment, and production release becomes an opportunity to validate application behavior.

In traditional workflows, testing usually happened after development was complete. QA teams would manually verify features near the end of the release cycle. That approach worked when deployments happened monthly or quarterly. It becomes unsustainable when applications are updated continuously throughout the day.

Continuous testing shifts quality validation earlier and distributes it across multiple layers of the pipeline. Unit tests validate business logic immediately after code changes. Integration tests confirm services communicate correctly. End-to-end tests verify user workflows, while production monitoring validates real-world application behavior after deployment.

The entire process is designed around rapid feedback. Developers should know within minutes whether a change introduced instability. Faster feedback reduces debugging complexity, improves developer productivity, and prevents small issues from becoming expensive production incidents later.

Why Testing Must Match Deployment Velocity

As release frequency increases, slow testing becomes one of the biggest obstacles to delivery speed. Pipelines that require hours to validate changes eventually create frustration across engineering teams. Developers begin bypassing checks, delaying merges, or manually approving deployments simply to maintain release velocity.

Continuous delivery only works when testing systems are optimized for speed and reliability. That does not mean removing test coverage. It means distributing validation intelligently across the pipeline so every stage provides the right level of confidence without unnecessary delay.

The goal is not to run every possible test before every deployment. The goal is to identify meaningful risks as early as possible while allowing low-risk changes to move quickly through the system.
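One way to picture this kind of risk-based distribution is a small selector that maps changed files to the test suites they should trigger. The sketch below is illustrative only: the path prefixes, suite names, and the `select_suites` helper are assumptions, not part of any particular CI system.

```python
# Hypothetical mapping from path prefixes to the suites a change should trigger.
RISK_RULES = {
    "docs/": {"lint"},
    "src/payments/": {"lint", "unit", "integration", "e2e"},
    "src/": {"lint", "unit", "integration"},
}

# Suites to run when no rule matches a changed file (an assumed default).
DEFAULT_SUITES = {"lint", "unit"}


def select_suites(changed_files):
    """Return the union of suites required by the changed files."""
    suites = set()
    for path in changed_files:
        # The longest matching prefix wins, so specific rules override general ones.
        matches = [prefix for prefix in RISK_RULES if path.startswith(prefix)]
        if matches:
            suites |= RISK_RULES[max(matches, key=len)]
        else:
            suites |= DEFAULT_SUITES
    return suites
```

With rules like these, a documentation-only change would run only linting, while a change under the assumed `src/payments/` path would pull in the slower suites as well.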

Real-World Example: Amazon’s High Deployment Frequency

Amazon is widely recognized for its large-scale continuous deployment practices. Engineering teams at Amazon deploy code changes frequently throughout the day using highly automated testing and deployment pipelines.

This level of deployment speed is only possible because testing, validation, and monitoring are deeply integrated into the delivery pipeline. Automated testing allows teams to detect failures quickly while reducing the risks associated with frequent releases.

The following table highlights how testing strategies typically evolve as deployment frequency increases:

| Deployment Frequency | Traditional Testing Approach | Continuous Testing Approach |
|---|---|---|
| Monthly releases | Large manual QA cycles | Automated validation with selective manual checks |
| Weekly releases | Scheduled regression testing | Layered automated testing |
| Daily releases | Partial automation with bottlenecks | Fully integrated CI/CD testing |
| Multiple releases per day | Unsustainable traditional QA | Continuous monitoring and automated validation |

Teams that successfully adopt CI/CD understand that testing is no longer a separate activity from delivery. Testing becomes part of delivery itself.

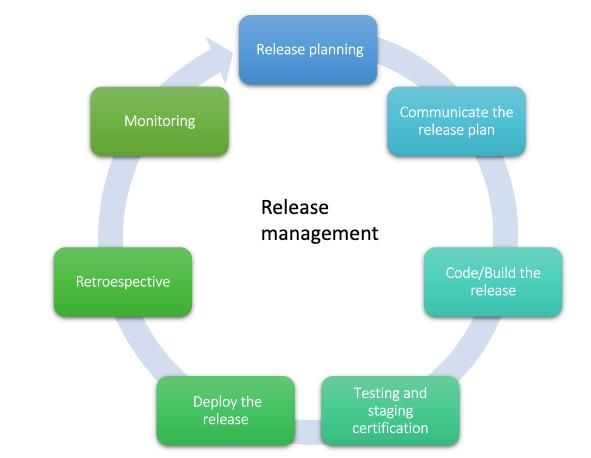

Where Testing Fits Inside the CI/CD Pipeline

Testing During Pull Requests and Pre-Merge Validation

The first layer of continuous testing usually begins before code is merged into the main branch. At this stage, the objective is to prevent unstable code from entering shared environments where it can affect other developers or deployment pipelines.

Pre-merge validation typically focuses on fast and highly reliable checks. These validations often include unit tests, static code analysis, linting, dependency scans, and lightweight integration testing. The emphasis here is speed because developers need immediate feedback during code review.

If pre-merge pipelines take too long, developer productivity drops significantly. Engineers spend more time waiting for validation than writing code. This is why mature CI/CD systems prioritize fast feedback loops during the early stages of development.
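A fail-fast runner with a time budget is one simple way to keep this stage quick. The following is a minimal sketch: the `run_pre_merge` name, the five-minute default budget, and the idea of passing checks as plain callables are all assumptions made for illustration.

```python
import time


def run_pre_merge(checks, budget_seconds=300.0):
    """Run (name, callable) checks in order and stop at the first failure.

    Returns (passed, results, within_budget). `results` holds one
    (name, ok, seconds) tuple for each check that actually ran.
    """
    results = []
    start = time.monotonic()
    for name, check in checks:
        t0 = time.monotonic()
        ok = bool(check())
        results.append((name, ok, time.monotonic() - t0))
        if not ok:
            break  # fail fast: later checks are skipped
    total = time.monotonic() - start
    # Passing requires that every check ran and every check succeeded.
    passed = len(results) == len(checks) and all(ok for _, ok, _ in results)
    return passed, results, total <= budget_seconds
```

Ordering the cheapest checks first (linting before unit tests, for example) means most failures surface in seconds, and the `within_budget` flag gives a team a signal when the stage starts to drift past its target.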

Strong pre-merge testing also improves collaboration across engineering teams. Developers gain confidence that new contributions meet baseline quality standards before reaching production pipelines.

Integration Testing During Build Stages

Once code is merged, pipelines move into deeper validation stages where the system is tested as a connected application rather than isolated components.

Integration testing validates communication between services, APIs, databases, caching systems, queues, and infrastructure dependencies. These tests are naturally slower than unit tests because they involve real application interactions rather than isolated functions.

This stage becomes especially important in distributed architectures and microservices environments where small failures in service communication can create major production incidents. Many CI/CD pipelines also execute container validation and infrastructure compatibility checks during this stage.

Unlike unit tests, integration tests focus less on implementation details and more on system behavior. Their purpose is to confirm that independently developed components function correctly together.
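One narrow form of this is a contract check, where a consumer states which fields and types it expects from a provider's response. The sketch below assumes a contract expressed as a plain dictionary of field names to Python types; the `contract_violations` helper is hypothetical and far simpler than a real contract-testing tool.

```python
def contract_violations(response, contract):
    """Compare a response payload against a consumer's expectations.

    `contract` maps field names to expected Python types. Extra fields in
    the response are allowed. Returns a list of human-readable problems.
    """
    problems = []
    for field, expected_type in contract.items():
        if field not in response:
            problems.append(f"missing field: {field}")
        elif not isinstance(response[field], expected_type):
            problems.append(
                f"wrong type for {field}: expected {expected_type.__name__}"
            )
    return problems
```

A check like this says nothing about how the provider builds the response. It only confirms that the two components still agree on the shape of the data they exchange.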

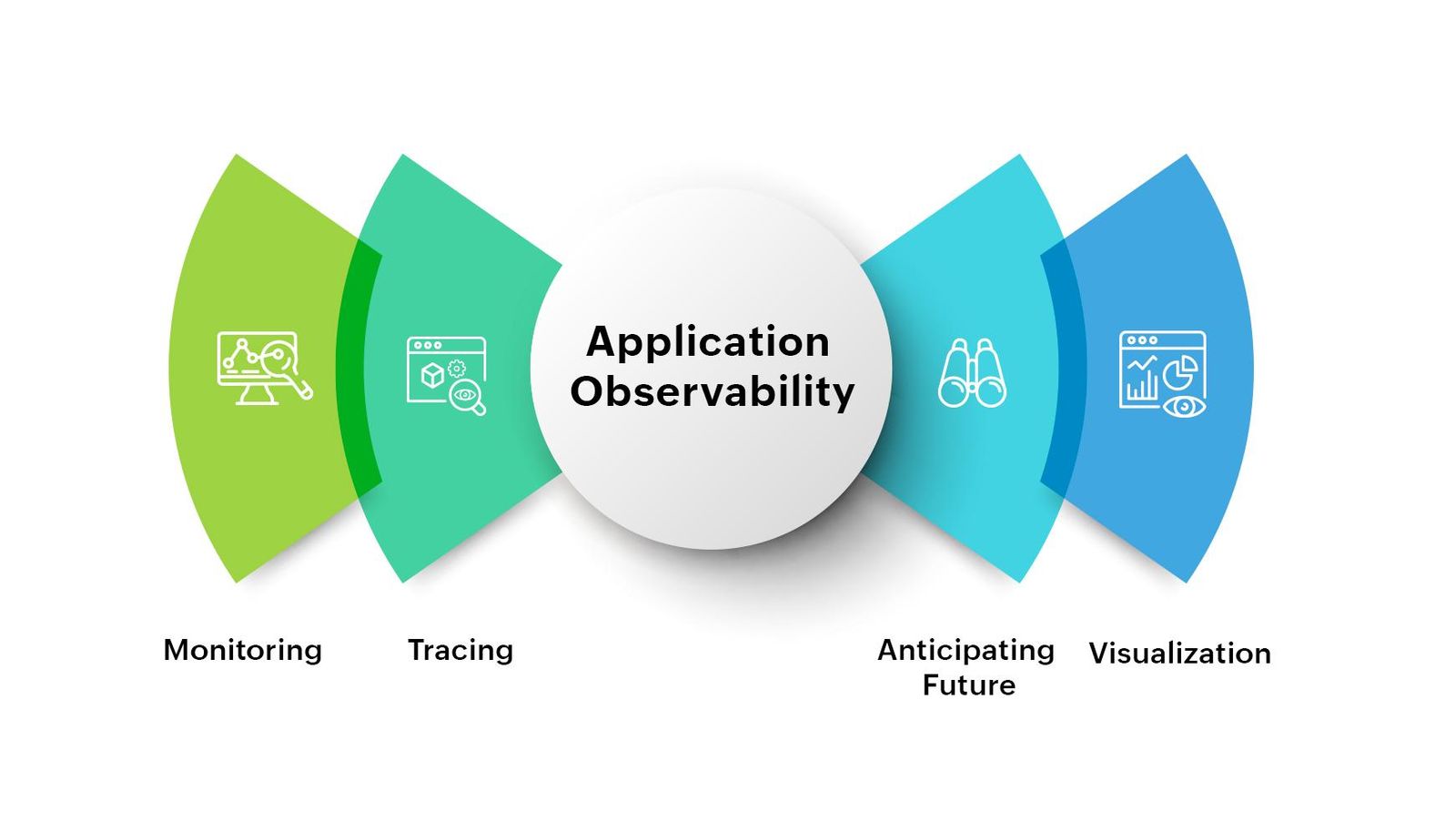

Production Testing and Post-Deployment Validation

Modern engineering teams increasingly treat production as an active validation environment rather than the final destination after testing is complete.

Post-deployment testing validates application health immediately after release. Smoke tests confirm critical workflows remain functional, while monitoring systems track latency, error rates, infrastructure health, and customer experience metrics.

This approach acknowledges an important reality of modern software delivery. Some issues only appear under real production conditions. Traffic patterns, regional infrastructure differences, third-party dependencies, and scaling behavior are difficult to replicate accurately in staging environments.

Instead of relying entirely on pre-production validation, modern CI/CD systems focus on rapid detection and containment of failures after deployment.
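Rapid detection often comes down to comparing a few health signals before and after a release. The sketch below assumes two signals (error rate and p95 latency) and illustrative thresholds; the `HealthSnapshot` type and `post_deploy_verdict` function are hypothetical names, and real systems usually evaluate many more metrics.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class HealthSnapshot:
    error_rate: float      # fraction of failed requests, 0.0 to 1.0
    p95_latency_ms: float  # 95th percentile latency in milliseconds


def post_deploy_verdict(before, after, max_error_rate=0.01,
                        max_latency_increase=0.20):
    """Return "rollback" if the release breached a threshold, else "healthy"."""
    # Absolute ceiling on the error rate after the release.
    if after.error_rate > max_error_rate:
        return "rollback"
    # Relative latency regression compared with the pre-deployment snapshot.
    if before.p95_latency_ms > 0:
        increase = (after.p95_latency_ms - before.p95_latency_ms) / before.p95_latency_ms
        if increase > max_latency_increase:
            return "rollback"
    return "healthy"
```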

Pre-Merge vs Post-Deploy Testing

What Should Be Tested Before Merge

Pre-merge testing should focus on high-confidence validation that prevents immediate instability from entering the shared codebase. Critical business logic, authentication systems, API contracts, and core workflows should usually be validated before code is merged.

The challenge is balancing confidence with speed. Running exhaustive regression suites before every merge quickly slows development velocity. Most mature engineering teams instead focus on identifying the smallest set of highly reliable tests capable of catching meaningful failures early.

Effective pre-merge testing usually prioritizes reliability over breadth. Small but stable test suites provide significantly more value than large pipelines filled with flaky or redundant validations.

What Is Better Tested After Deployment

Certain validations become more effective after deployment because they depend heavily on real infrastructure and production conditions.

Performance testing, observability validation, and checks of geographic routing behavior, CDN interactions, scaling characteristics, and third-party integrations often produce more accurate results in production than in staging. Progressive delivery strategies allow teams to test these scenarios safely without exposing all users to potential instability.

Canary deployments, blue-green deployments, and feature flags have dramatically changed how post-deployment testing works. Teams can now release features gradually, validate behavior using real traffic, and roll back changes quickly if problems appear.

This reduces deployment risk while maintaining rapid release velocity.
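The gradual release described above can be sketched as a loop over traffic percentages that compares the canary against the baseline at each step. Everything here is an assumption made for illustration: the `progressive_rollout` name, the `observe` callback that returns a pair of error rates, and the tolerance value.

```python
def progressive_rollout(steps, observe, tolerance=0.005):
    """Walk through canary traffic percentages, rolling back on the first bad step.

    steps:   increasing traffic percentages, e.g. [5, 25, 50, 100]
    observe: callable(percent) -> (baseline_error_rate, canary_error_rate)

    Returns ("promoted", final_percent) or ("rolled_back", percent_at_failure).
    """
    for percent in steps:
        baseline, canary = observe(percent)
        # Roll back when the canary is worse than the baseline by more than
        # the allowed tolerance.
        if canary - baseline > tolerance:
            return ("rolled_back", percent)
    return ("promoted", steps[-1] if steps else 0)
```

Because the comparison happens at every step, a regression that only shows up under heavier traffic is caught before the release reaches all users.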

Balancing Speed and Confidence

One of the most common mistakes in CI/CD adoption is attempting to maximize testing at every stage. While that sounds safer in theory, it usually creates pipelines that are too slow to support continuous delivery effectively.

Continuous testing is fundamentally about optimization. Fast tests run constantly. Broader validation runs selectively. Monitoring continues after deployment. Every layer contributes confidence without unnecessarily slowing the pipeline.

The following table demonstrates how testing depth is commonly distributed across CI/CD systems:

| Pipeline Stage | Primary Goal | Typical Test Types |
|---|---|---|
| Pre-merge | Fast developer feedback | Unit tests, linting, static analysis |
| Build stage | System validation | Integration tests, API testing |
| Pre-deployment | Release confidence | End-to-end workflows |
| Post-deployment | Production verification | Smoke tests, monitoring, canary analysis |

Successful CI/CD pipelines are designed around risk management rather than maximum test volume.

Smoke Tests vs Full Regression Suites

The Role of Smoke Testing

Smoke tests exist to answer one important question quickly: “Is the application fundamentally operational?”

These tests focus on validating the most critical workflows in the system. User authentication, payment processing, API availability, navigation flows, and database connectivity are common examples.

Because smoke tests are intentionally lightweight, they execute quickly and provide immediate feedback after deployments. This makes them highly valuable in fast-moving CI/CD pipelines where rapid validation is essential.

Smoke testing is especially important in production environments because it acts as the first layer of defense after release.
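A smoke suite can be as small as a list of critical endpoints and the status each should return. In the sketch below, the `SmokeCheck` type, the health-check paths, and the injected `fetch_status` callable are assumptions; injecting the fetch function keeps the example independent of any specific HTTP client.

```python
from dataclasses import dataclass
from typing import Callable, Iterable, List


@dataclass(frozen=True)
class SmokeCheck:
    name: str
    path: str
    expected_status: int = 200


def run_smoke_checks(checks: Iterable[SmokeCheck],
                     fetch_status: Callable[[str], int]) -> List[str]:
    """Return the names of the checks that failed (an empty list means healthy)."""
    failures = []
    for check in checks:
        try:
            status = fetch_status(check.path)
        except Exception:
            # A timeout or connection error counts as a failed check.
            failures.append(check.name)
            continue
        if status != check.expected_status:
            failures.append(check.name)
    return failures
```

In practice `fetch_status` would wrap a real HTTP request against the deployed environment, and a non-empty result would typically halt the rollout or trigger a rollback.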

Why Full Regression Suites Still Matter

Although smoke tests provide speed, they cannot validate every possible interaction within a complex application. Full regression suites remain important for identifying edge cases, workflow conflicts, and unexpected side effects introduced by new changes.

Regression testing becomes even more critical in enterprise systems, legacy applications, and highly regulated industries where software behavior must remain predictable across large interconnected workflows.

The challenge is scalability. As applications grow, regression suites often become increasingly slow and expensive to maintain. Many engineering teams eventually reach a point where running full regression validation before every deployment becomes impractical.

To solve this, mature organizations often move regression testing to scheduled or risk-triggered runs rather than using it as a blocking requirement for every release.
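A scheduled or risk-triggered policy can be expressed as a small decision function. The sketch below is one possible shape, assuming a 24-hour staleness limit and a hypothetical set of change labels that mark a release as high risk.

```python
from datetime import datetime, timedelta

# Hypothetical labels that mark a change as high risk.
HIGH_RISK_LABELS = {"schema-migration", "payments", "auth"}


def should_run_full_regression(last_run, now, change_labels,
                               max_age=timedelta(hours=24)):
    """Decide whether the full regression suite should run for this change.

    The suite runs when the last full run is older than `max_age`
    (the scheduled trigger) or when any label marks the change as
    high risk (the risk trigger).
    """
    if now - last_run >= max_age:
        return True
    return bool(HIGH_RISK_LABELS & set(change_labels))
```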

Building a Sustainable Test Pyramid

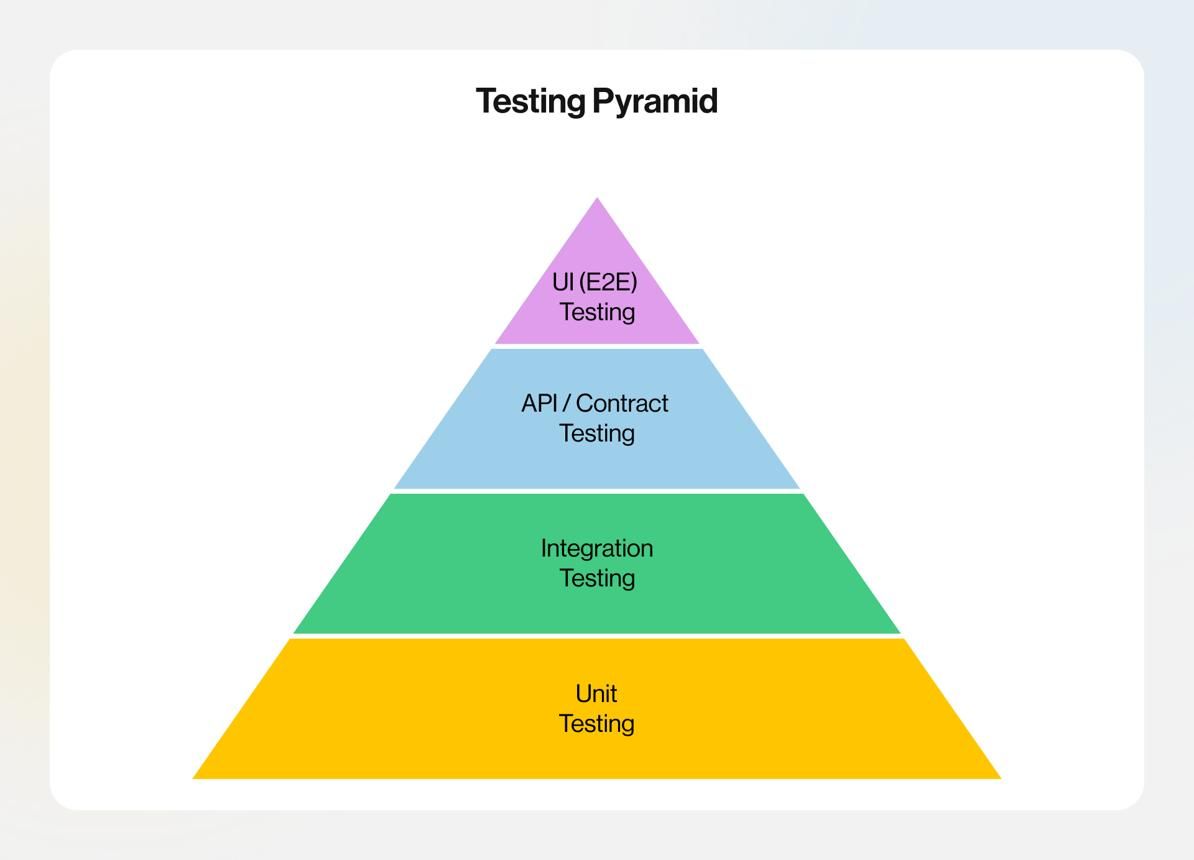

One of the most effective ways to scale continuous testing is by structuring validation around the testing pyramid.

At the base of the pyramid are fast and reliable unit tests. Integration tests sit above them and validate service interactions. End-to-end tests occupy the top layer and focus on complete business workflows.

The testing pyramid encourages teams to maximize fast automated validation while minimizing dependency on slow and brittle UI testing.

| Test Type | Speed | Reliability | Maintenance Cost |
|---|---|---|---|
| Unit Tests | Very fast | High | Low |
| Integration Tests | Moderate | Medium to high | Medium |
| End-to-End Tests | Slow | Lower | High |

Teams that ignore this balance often end up with pipelines dominated by fragile end-to-end automation that slows releases significantly.
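A quick way to see whether a suite has drifted away from the pyramid is to compare test counts per layer. The `pyramid_report` helper below is a hypothetical sketch; it uses raw test counts as a rough proxy and assumes the three layer names used in the table above.

```python
def pyramid_report(counts):
    """Summarize the shape of a test suite.

    `counts` maps the layers "unit", "integration", and "e2e" to the
    number of tests in each. Returns each layer's share of the total and
    whether the counts narrow from unit to integration to end-to-end.
    """
    total = sum(counts.values())
    if total == 0:
        return {"shares": {}, "is_pyramid": False}
    shares = {layer: n / total for layer, n in counts.items()}
    is_pyramid = counts["unit"] >= counts["integration"] >= counts["e2e"]
    return {"shares": shares, "is_pyramid": is_pyramid}
```

Counts are a crude measure, since one end-to-end test can take longer than hundreds of unit tests, so a team might prefer to weight layers by runtime instead.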

Building Effective Release Gates

Understanding Release Gates

Release gates are automated checkpoints that determine whether a deployment can safely continue through the pipeline. These gates help engineering teams enforce quality standards consistently without relying on manual approvals alone.

A release gate may stop deployment if automated tests fail, security vulnerabilities are detected, performance thresholds degrade, or infrastructure health checks indicate instability.

The purpose of release gates is not to slow deployments. Their purpose is to prevent high-risk changes from reaching customers without validation.
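The gate conditions described above can be modeled as a list of named predicates evaluated against the signals a pipeline has collected. This is a minimal sketch: the `Gate` type, the signal keys, and the thresholds are assumptions, not the interface of any specific CI/CD product.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Gate:
    name: str
    passes: Callable[[dict], bool]


# Hypothetical gates mirroring the four conditions described above.
GATES = [
    Gate("tests", lambda s: s["failed_tests"] == 0),
    Gate("security", lambda s: s["critical_vulnerabilities"] == 0),
    Gate("performance", lambda s: s["p95_latency_ms"] <= s["p95_budget_ms"]),
    Gate("infrastructure", lambda s: s["healthy_hosts"] >= s["min_healthy_hosts"]),
]


def evaluate_gates(signals, gates=GATES):
    """Return (allowed, blocking_gate_names) for a candidate deployment."""
    blocked = [gate.name for gate in gates if not gate.passes(signals)]
    return (not blocked, blocked)
```

Returning the names of the blocking gates, rather than a bare pass or fail, tells the engineer immediately which standard the release did not meet.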