Mobile app analytics is often treated as a solved layer in the product stack. Teams integrate SDKs, define event schemas, connect dashboards, and assume they now have visibility into user behavior. On the surface, everything looks mature: hundreds of tracked events, real-time dashboards, and structured reports flowing across teams.

Yet a consistent pattern emerges across mobile products. Users install the app, open it once or twice, and then disappear. Retention stagnates, engagement feels inconsistent, and teams struggle to explain why this is happening. The issue is not a lack of data. It is a deeper misalignment between what is being measured and what actually reflects user progress.

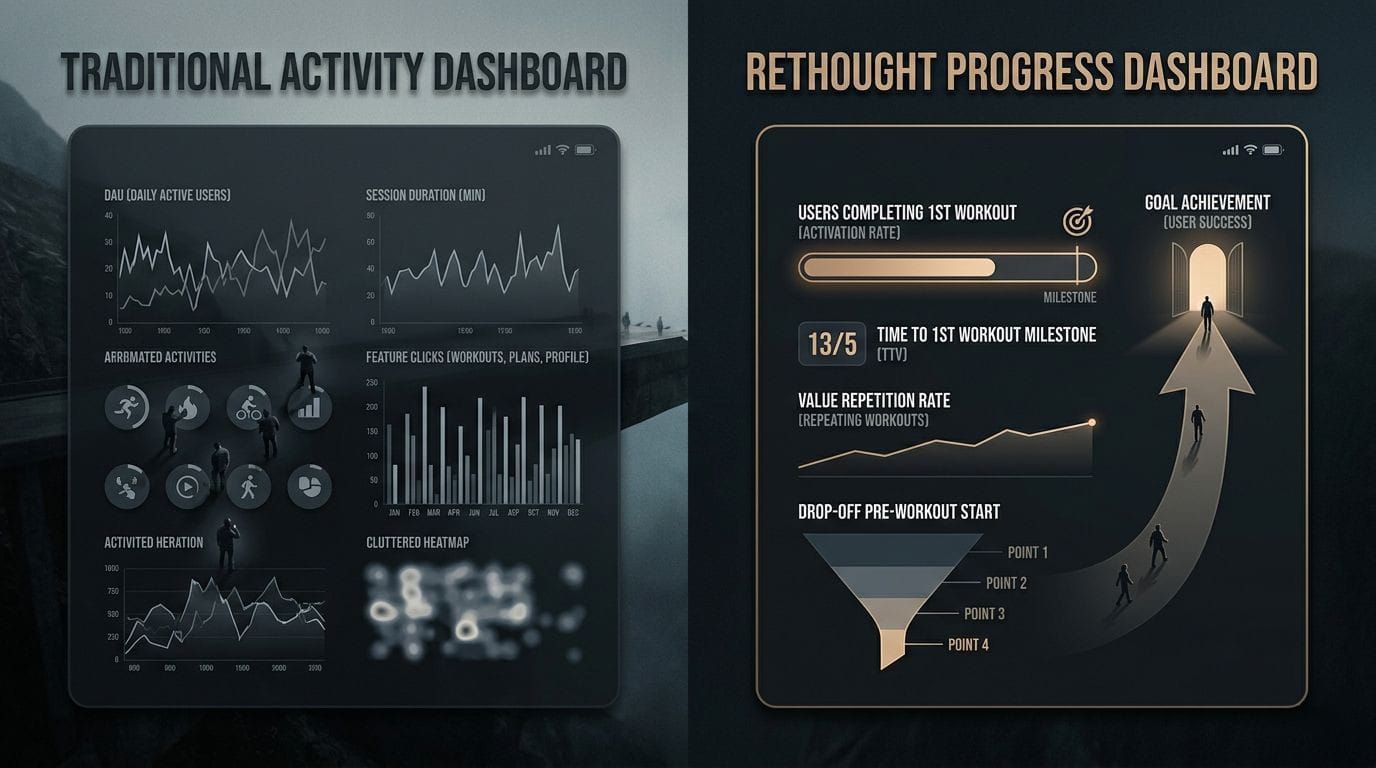

Most mobile analytics systems are designed to capture activity, but product success depends on understanding outcomes.

The Core Misalignment: Activity vs Progress

At the center of ineffective analytics is a subtle but critical confusion. Teams believe they are measuring user behavior, but in reality, they are primarily measuring user activity. This distinction is not semantic; it directly impacts how decisions are made.

Activity-based measurement focuses on observable actions. It captures what users click, how often they open the app, and how long they stay. Progress-based measurement, on the other hand, evaluates whether users are moving toward a meaningful outcome. It asks whether the product is actually working for them.

This is where most analytics systems fall short. They provide high visibility into movement within the app, but very little clarity on whether that movement translates into value. As a result, teams optimize for engagement patterns without fully understanding if those patterns represent success or friction.

What Teams Typically Measure (And Why It Feels Sufficient)

Most mobile analytics implementations follow a familiar structure. They rely on standardized metrics that are easy to track, widely accepted, and supported by default in analytics tools. These metrics create a sense of completeness because they are quantitative, scalable, and comparable across products.

Common Mobile Analytics Metrics

| Metric | What It Captures | Implicit Assumption |
|---|---|---|
| Installs | Number of app downloads | Growth equals product adoption |
| DAU / MAU | Frequency of app usage | Usage reflects engagement quality |
| Session Length | Time spent per session | Longer sessions indicate satisfaction |
| Retention Rate | Users returning over time | Returning users are finding value |
| Event Tracking | Feature-level interactions | More interaction equals better usage |

While these metrics are useful for monitoring surface-level trends, they become problematic when treated as indicators of product success. Each of them relies on an assumption that does not always hold true in practice.

For instance, an increase in session length might suggest deeper engagement, but it could also indicate confusion or inefficient navigation. Similarly, a high number of sessions might reflect repeated attempts to complete a task rather than successful usage. Without understanding the context behind these metrics, teams risk drawing incorrect conclusions.
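One way to make this ambiguity visible is to place an activity count next to an outcome flag for each user. The sketch below is illustrative only: the event names (`session_start`, `task_completed`) and the tuple layout are assumptions made for this example, not the schema of any particular analytics SDK.

```python
from collections import defaultdict

# Hypothetical event log of (user_id, event_name) pairs.
# Event names are assumptions chosen for illustration.
events = [
    ("u1", "session_start"), ("u1", "task_completed"),
    ("u2", "session_start"), ("u2", "session_start"),
    ("u2", "session_start"), ("u2", "session_start"),
]

def summarize(events, outcome_event="task_completed"):
    """Return {user_id: (session_count, reached_outcome)}."""
    sessions = defaultdict(int)
    reached = defaultdict(bool)
    for user_id, name in events:
        if name == "session_start":
            sessions[user_id] += 1
        elif name == outcome_event:
            reached[user_id] = True
    users = set(sessions) | set(reached)
    return {u: (sessions[u], reached[u]) for u in users}

summary = summarize(events)
```

In this sample data, `u2` has four times as many sessions as `u1` but never reaches the outcome. Ranked by session count alone, `u2` would look like the more engaged user.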

Where Traditional Metrics Break Down

The limitation of traditional metrics is not that they are incorrect, but that they are incomplete. They act as proxies for value without directly measuring it. This creates a layer of ambiguity that becomes dangerous when used for decision-making.

Installs, for example, are often celebrated as a growth signal. However, they only reflect how well acquisition is working, not whether the product has been adopted. A user downloading the app has not yet experienced any meaningful outcome. Treating installs as success conflates marketing performance with product performance.

Similarly, retention is often considered a north-star metric. While it indicates that users are returning, it does not explain what they are doing when they return. Without connecting retention to meaningful actions, it becomes a lagging indicator rather than a diagnostic one.
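A rough way to connect retention to meaningful actions is to split returning users by whether they performed a value event when they came back. The following is a minimal sketch under stated assumptions: the event name `order_placed`, the day 7 to day 13 return window, and the data layout are all hypothetical choices for illustration.

```python
from datetime import date

# Hypothetical install dates and dated events; all names are illustrative.
installs = {
    "u1": date(2024, 1, 1),
    "u2": date(2024, 1, 1),
    "u3": date(2024, 1, 1),
}
events = [
    ("u1", date(2024, 1, 8), "app_open"),
    ("u1", date(2024, 1, 8), "order_placed"),
    ("u2", date(2024, 1, 9), "app_open"),
]

def retention_breakdown(installs, events, value_event, start_day=7, end_day=13):
    """Share of installed users who returned in the window, and the share
    who also performed value_event during that window."""
    returned, returned_with_value = set(), set()
    for user_id, day, name in events:
        if user_id not in installs:
            continue
        offset = (day - installs[user_id]).days
        if start_day <= offset <= end_day:
            returned.add(user_id)
            if name == value_event:
                returned_with_value.add(user_id)
    total = len(installs)
    if total == 0:
        return {"retained": 0.0, "retained_with_value": 0.0}
    return {
        "retained": len(returned) / total,
        "retained_with_value": len(returned_with_value) / total,
    }

result = retention_breakdown(installs, events, value_event="order_placed")
```

With this sample data, two of three users count as retained, but only one of them did anything the product exists to deliver. The gap between the two numbers is where diagnosis can start.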

When metrics are used as substitutes for value instead of reflections of it, they create confidence without clarity.

The Missing Layer: Defining and Measuring Value

To move beyond surface-level analytics, teams need to explicitly define what value means within their product. Value is not a feature or a screen. It is the outcome a user achieves that justifies their continued use of the app.

In a food delivery app, value is not browsing menus but successfully placing an order. In a fitness app, value is not opening the app but completing a workout. In a finance app, value is not viewing a dashboard but gaining clarity about spending or saving.

Until this outcome is clearly defined, analytics remains disconnected from product reality. Events get tracked, dashboards get populated, but none of it answers the fundamental question: is the product working for the user?

The First Moment of Value (Activation)

One of the most critical concepts in value-driven analytics is the first moment of value, often referred to as activation. This is the point at which a user experiences the core benefit of the product for the first time.

It is important to distinguish this from onboarding completion. Completing onboarding simply means the user followed a sequence of steps. Activation means the user achieved something meaningful.

Examples of Activation Across App Categories

| App Category | First Moment of Value Example |
|---|---|
| Food Delivery | First successful order placed |
| Fitness App | First workout completed |
| Social App | First meaningful interaction (comment or reply) |
| Productivity App | First task completed |
| Finance App | First expense tracked or categorized |

Activation serves as the bridge between acquisition and retention. If users do not reach this point, retention metrics lose their significance because there was no value to retain in the first place.
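Once the first moment of value is defined, an activation rate can be computed directly from event data. The sketch below assumes a seven-day activation window and a simple in-memory layout of signup times and value-event timestamps; both are hypothetical choices for this example, and the right window depends on the product.

```python
from datetime import datetime, timedelta

# Hypothetical signup times per user.
signups = {
    "u1": datetime(2024, 1, 1),
    "u2": datetime(2024, 1, 1),
    "u3": datetime(2024, 1, 2),
    "u4": datetime(2024, 1, 3),
}
# Hypothetical timestamps of each user's value event (e.g. first order placed).
value_events = [
    ("u1", datetime(2024, 1, 2)),   # within the window
    ("u2", datetime(2024, 1, 11)),  # ten days after signup, outside the window
    ("u3", datetime(2024, 1, 5)),   # within the window
]

def activation_rate(signups, value_events, window=timedelta(days=7)):
    """Fraction of signed-up users whose value event falls within
    `window` of their signup. The 7-day default is an assumption."""
    activated = set()
    for user_id, ts in value_events:
        start = signups.get(user_id)
        if start is not None and start <= ts <= start + window:
            activated.add(user_id)
    return len(activated) / len(signups) if signups else 0.0

rate = activation_rate(signups, value_events)
```

Here two of the four users activate within the window, so `rate` is 0.5. A user like `u2`, who reaches value late, or `u4`, who never does, is exactly the case that onboarding-completion metrics tend to hide.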

Users do not return because they opened the app. They return because something worked.

Metrics That Actually Reflect Product Performance

Once value is defined, analytics can shift toward measuring how efficiently users reach and repeat that value. This introduces a set of metrics that are fundamentally different from traditional activity-based ones.