Modern apps are instrumented end to end, capturing everything from installs and sessions to micro-interactions across screens. Dashboards are always available, always updating, and always full. On the surface, it feels like complete visibility into how users behave.

Yet decisions remain unclear. Features are shipped without confidence, retention fluctuates without explanation, and teams often disagree on what the data actually means. This is the contradiction: the problem is no longer having data, but the inability to turn that data into clear, confident decisions.

The Illusion of Visibility in Mobile Dashboards

Dashboards are built to make data visible, but visibility often creates a false sense of understanding. When numbers are neatly organized into charts and trends, it feels like the system is under control. Teams assume that if something is measurable, it must also be explainable.

In practice, dashboards mostly capture outcomes. They show that something happened, but not the conditions that led to it. A drop in conversion or a spike in engagement appears as a clean signal, but the underlying behavior remains hidden. This gap between observation and explanation is where most product confusion begins.

“What you see in dashboards is behavior reduced to numbers. What you need for decisions is behavior in context.”

Dashboard Overload: When Measurement Scales Faster Than Understanding

As mobile products evolve, tracking expands faster than interpretation. New features introduce new events, experiments add more metrics, and each team builds its own reporting layer. Over time, dashboards become dense collections of signals with no clear structure.

The core issue is not the presence of too much data, but the absence of prioritization. When everything is measured, nothing stands out as truly important. Teams move from one chart to another, searching for clarity, but end up with a fragmented understanding instead.

| Layer of Growth | What Increases | What Breaks |
|---|---|---|
| Product complexity | Features and flows | Clarity of user journey |
| Analytics coverage | Events and metrics | Signal prioritization |
| Team involvement | Stakeholders and dashboards | Shared understanding |

This imbalance makes dashboards harder to use precisely when products need them the most.

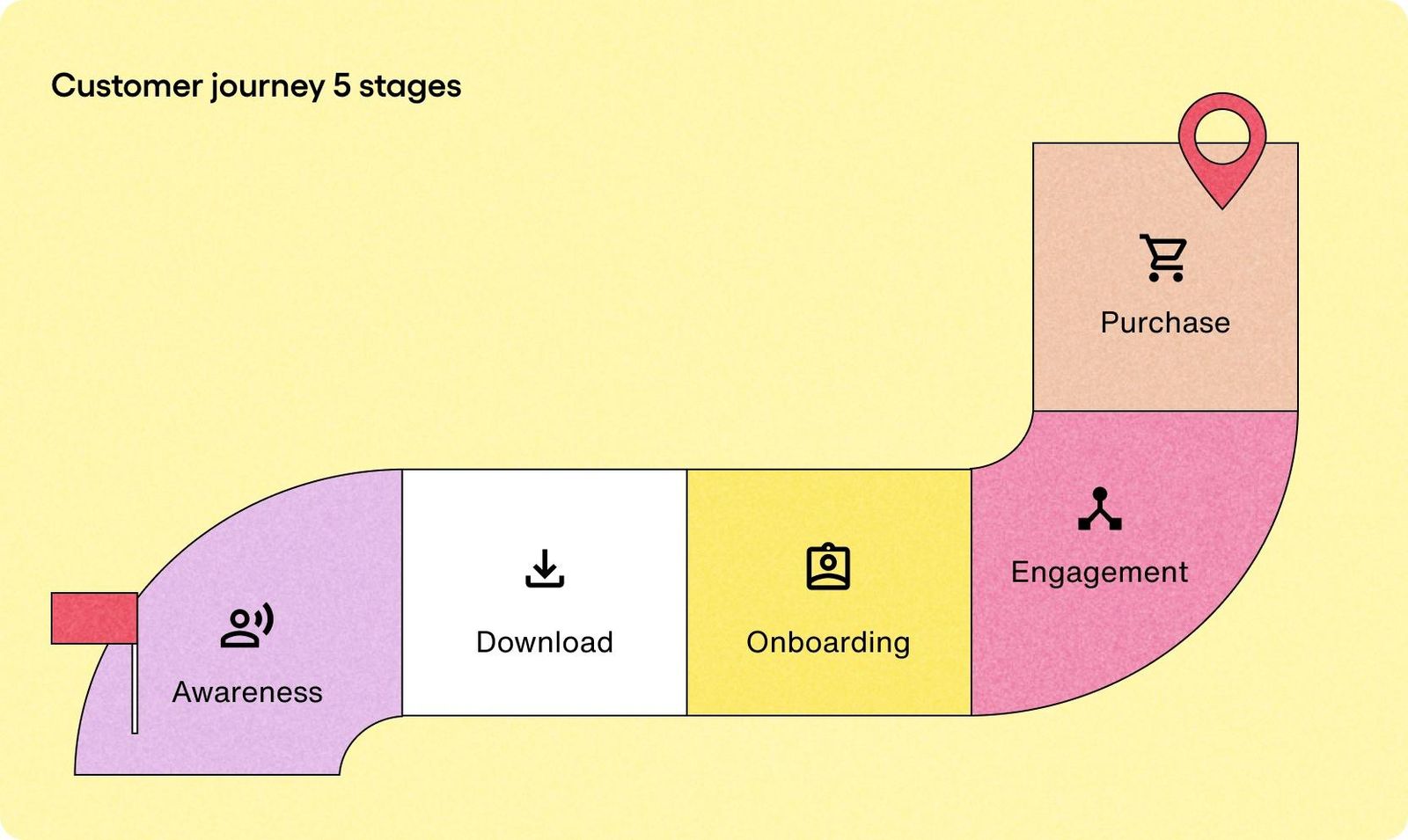

Why KPIs Don’t Tell You What Users Actually Experience

Key performance indicators are essential for alignment. They help teams track progress and communicate performance at a high level. However, they are fundamentally limited when it comes to decision making.

KPIs compress complex behavior into single numbers. A retention rate reflects many different user journeys combined into one percentage. A conversion rate hides multiple friction points behind a single outcome. These metrics are useful summaries, but they are poor diagnostic tools.

When teams rely too heavily on KPIs, they end up reacting to symptoms rather than understanding causes. The metric signals that something is wrong, but it does not reveal where or why the experience is breaking.
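To make this compression concrete, here is a minimal Python sketch with invented numbers: two hypothetical four-step funnels that report the same overall conversion rate but lose users at completely different steps. The step names, counts, and the helpers `overall_conversion`, `step_conversion`, and `weakest_step` are illustrative assumptions, not part of any particular analytics tool.

```python
# Illustrative only: invented step counts for two hypothetical onboarding funnels.
funnel_a = {"open": 1000, "signup": 900, "profile": 850, "first_action": 200}
funnel_b = {"open": 1000, "signup": 250, "profile": 220, "first_action": 200}


def overall_conversion(funnel: dict[str, int]) -> float:
    """The single KPI: users who finished divided by users who started."""
    counts = list(funnel.values())
    return counts[-1] / counts[0]


def step_conversion(funnel: dict[str, int]) -> dict[str, float]:
    """Conversion between each pair of consecutive steps (dicts keep insertion order)."""
    steps = list(funnel.items())
    return {
        f"{prev_name}->{next_name}": next_count / prev_count
        for (prev_name, prev_count), (next_name, next_count) in zip(steps, steps[1:])
    }


def weakest_step(funnel: dict[str, int]) -> str:
    """The transition with the lowest step conversion, i.e. the main friction point."""
    rates = step_conversion(funnel)
    return min(rates, key=rates.get)


for name, funnel in (("A", funnel_a), ("B", funnel_b)):
    print(name, overall_conversion(funnel), weakest_step(funnel))
```

Both funnels report an overall conversion of 0.2, yet A loses most users at `profile->first_action` while B loses them at `open->signup`. The KPI is identical; the problems, and the fixes they call for, are not.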

The Structural Gap Between Activity Data and Real Behavior

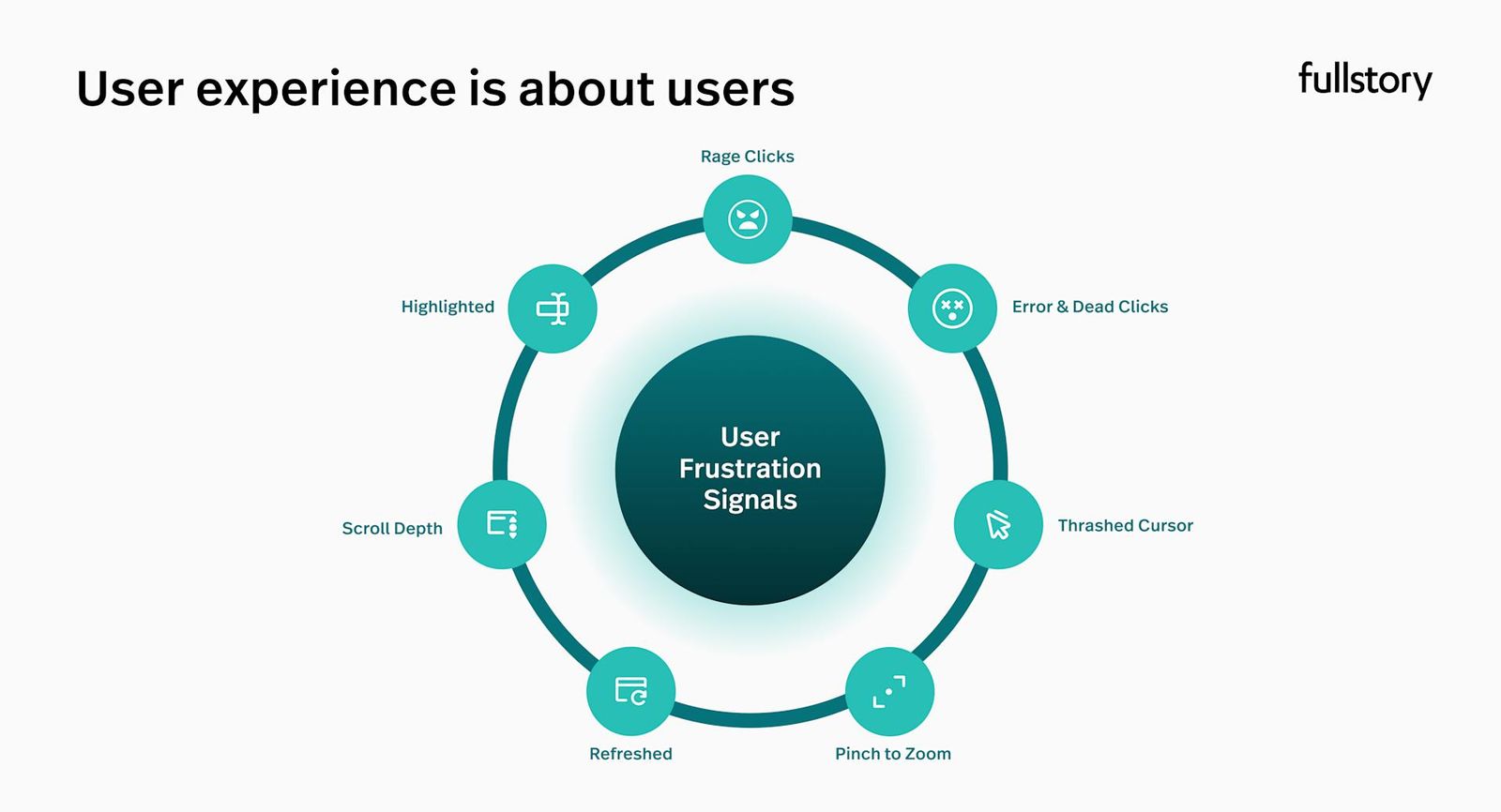

Most mobile analytics systems are built around events. They assume that behavior can be reconstructed by stitching together discrete actions such as clicks, screen views, or completions. This approach works well for counting activity, but it struggles to capture experience.

Real user behavior is continuous and often ambiguous. Between two tracked events, users may hesitate, get confused, retry actions, or abandon their intent. These moments rarely appear in dashboards, yet they define whether a user continues or leaves.

“Events show what users did. Experience explains why they did it.”

This structural gap leads to a simplified version of reality where behavior looks more intentional and linear than it actually is.
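Some of what happens between events can be recovered by looking at order and timing instead of counts. The Python sketch below rests on stated assumptions: the `Event` record, the event names, the 20-second hesitation threshold, and the idea that back-to-back repeats of the same event approximate retries are all hypothetical choices, not a standard method. An event count for this session would simply report three `tap:apply_coupon` events; the sequence suggests two retries followed by a 38-second pause before the user returned to the cart.

```python
from dataclasses import dataclass


@dataclass
class Event:
    t: float    # seconds since session start (hypothetical schema)
    name: str   # hypothetical event name, e.g. "tap:apply_coupon"


def find_hesitations(events: list[Event], threshold_s: float = 20.0) -> list[tuple[str, str, float]]:
    """Return (previous event, next event, gap) for gaps longer than threshold_s.

    The 20-second default is an arbitrary assumption; a long gap is only a
    proxy for hesitation or confusion, not proof of it.
    """
    ordered = sorted(events, key=lambda e: e.t)
    flagged = []
    for prev, nxt in zip(ordered, ordered[1:]):
        gap = nxt.t - prev.t
        if gap > threshold_s:
            flagged.append((prev.name, nxt.name, gap))
    return flagged


def find_retries(events: list[Event]) -> list[str]:
    """Return the name of each event that immediately repeats the previous one.

    Consecutive identical events are treated as a rough signal of a retried action.
    """
    ordered = sorted(events, key=lambda e: e.t)
    return [nxt.name for prev, nxt in zip(ordered, ordered[1:]) if nxt.name == prev.name]


# Hypothetical session log.
session = [
    Event(0.0, "screen_view:checkout"),
    Event(4.0, "tap:apply_coupon"),
    Event(6.5, "tap:apply_coupon"),
    Event(9.0, "tap:apply_coupon"),
    Event(47.0, "screen_view:cart"),
]

print(find_hesitations(session))
print(find_retries(session))
```

Neither signal explains *why* the user struggled, but both point to where a closer look, such as a session replay or a usability test, is worth the effort.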

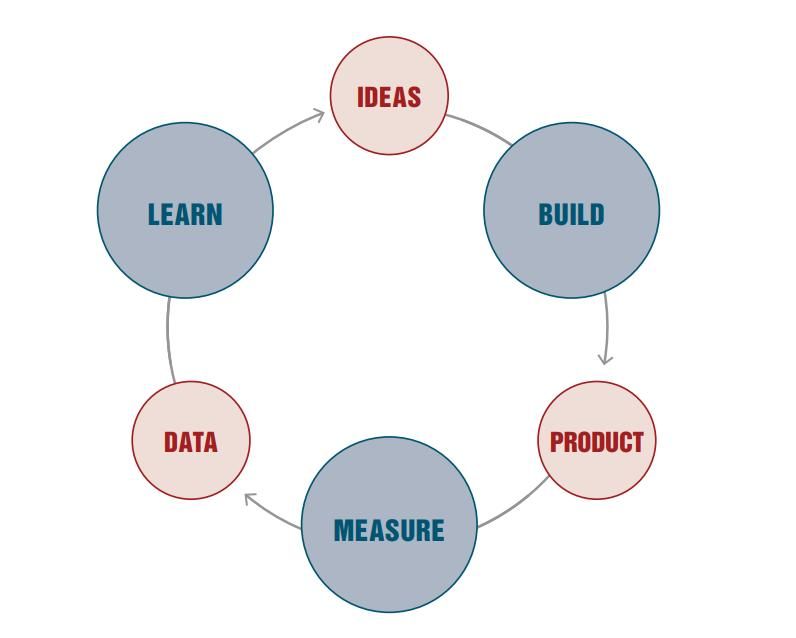

Why Teams Look at Data But Don’t Act

In most organizations, dashboards are regularly reviewed. Teams gather around metrics, analyze trends, and discuss performance. Despite this, many of these discussions fail to produce concrete actions.

The problem lies in the missing transition from observation to decision. Dashboards highlight changes but do not guide interpretation. Without a clear process for moving from data to hypothesis, and from hypothesis to validation, teams remain stuck in a loop of speculation.

A typical pattern looks like this:

- A metric changes

- Multiple explanations are suggested

- No single explanation is validated

- No clear action is taken

This is not a failure of effort. It is a failure of structure.

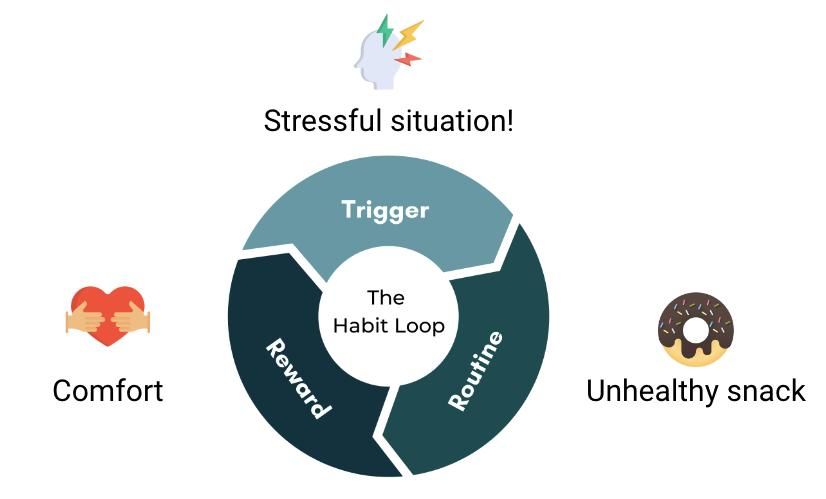

The Comfort Trap: When Monitoring Replaces Thinking

Dashboards create a sense of activity. Checking metrics, preparing reports, and reviewing trends feel like productive work. Teams stay engaged with data, which creates the impression of progress.

However, this activity often replaces deeper thinking. Instead of investigating user behavior, teams rely on surface-level interpretations. Instead of challenging assumptions, they stay within familiar metrics.

Over time, this creates a culture where being informed is mistaken for being effective. The team is busy with data, but not necessarily improving the product.