TL;DR: "It works on my device" isn't a reliability statement; it's a sampling error. Real mobile reliability is a whole different game: your app has to behave consistently across thousands of device configurations, sketchy network connections, and backend states you never even dreamed of testing. So what can you do? This article covers the four axes of mobile reliability chaos, how to define what "reliable" actually means with SLAs and error budgets, and what high-reliability teams do structurally differently from everyone else.

Every mobile team has said it. "We tested this. It works."

And they are right - on that device, on that OS version, on that Wi-Fi connection, at that time of day, with that backend latency. It works.

The problem is that your users are not on your device. They are on a 4-year-old Redmi with 2GB RAM, on 3G at a metro station, with a backend that just started timing out for a specific user segment in Karnataka. And your app, which works perfectly on your MacBook-connected Pixel Pro, is silently failing for 8% of your actual user base.

This is the reliability gap. It is not a testing gap. It is a systems-thinking gap.

App crashes are the visible face of reliability failure. But crashes are only what happens when failure is loud. Most reliability failures are quiet. The app doesn't crash - it just doesn't work. A screen hangs. A request times out with no feedback. Data loads from cache that's 24 hours stale. The user concludes the app is broken, opens a competitor, and never files a bug report.

Reliability engineering for mobile is the discipline of making consistency deliberate. Not "it usually works." Consistency across chaos.

What "It Works on My Device" Actually Means (and Why It's Dangerous)

When a developer or QA engineer says "it works on my device," they are making an implicit claim that is technically true and operationally meaningless.

Here is what that statement actually contains:

- Tested on 1–3 specific devices

- Tested on a specific OS version (usually the latest)

- Tested on a stable Wi-Fi connection

- Tested when the backend was healthy

- Tested in a clean app state, not after 3 days of background sessions and low memory

Now here is what your real user base looks like, based on Android distribution data from Android Studio's distribution dashboard:

| OS Version | Approximate Active Share |
|---|---|
| Android 14 | ~30% |
| Android 13 | ~26% |
| Android 12 | ~16% |
| Android 11 | ~12% |
| Android 10 and below | ~16% |

That last row? It's the one teams routinely ignore. And it's the exact spot where your reliability completely collapses.

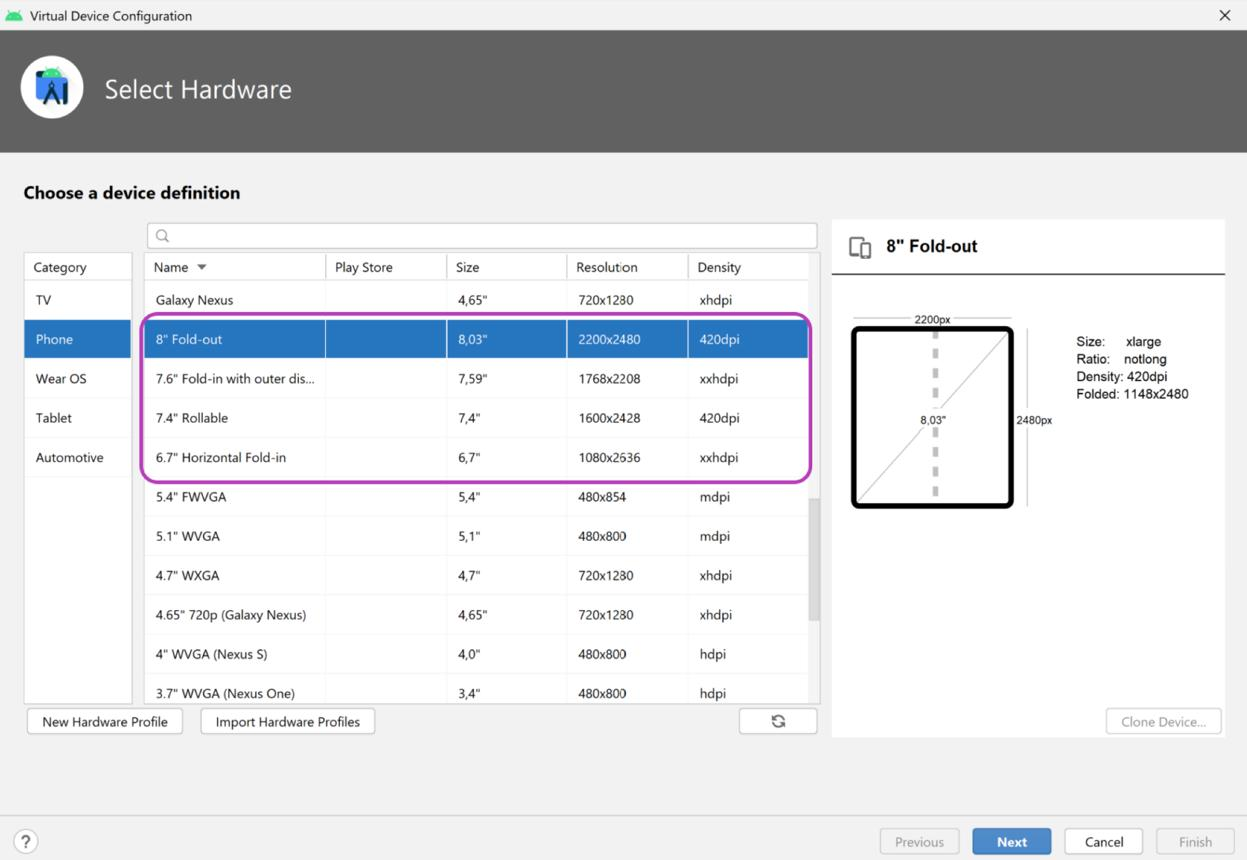

Add device fragmentation on top of all that. Android runs on thousands of distinct hardware configurations: different screen densities, GPU power, memory limits, and manufacturer-level UI overlays (like Samsung One UI, MIUI, or OxygenOS), plus custom system behaviors that interact with your app in ways no emulator or small device lab could ever capture.

"It works on my device." That isn't confidence. It's just a sample size of one pulled from a wild population of thousands.

The Four Axes of Mobile Reliability Chaos

Reliability in mobile is not one problem. It is four simultaneous problems that interact.

1. Device Fragmentation

Android runs on about 3 billion active devices. That's a staggering number spread across countless manufacturers, screen sizes, RAM configurations, and chipsets. And while iOS is more controlled, it's not immune either: rendering, memory management, and even system-level API behavior can differ meaningfully between an iPhone 12 and an iPhone 16.

So what breaks because of all this device fragmentation?

You get weird rendering bugs on specific screen densities (like xxhdpi vs xxxhdpi). Code that runs perfectly fine on a phone with 8GB of RAM causes out-of-memory crashes on devices with only 2–3GB. There are also maddening differences in camera and media APIs across manufacturer implementations. Even permission dialogs act differently depending on whether you're using MIUI, One UI, or stock Android. The worst culprit might be background process killing: some Android skins (looking at you, Xiaomi and Huawei) are so aggressive that they completely break background sync and notification delivery.

Teams that test on three devices and declare it "covered" aren't doing reliability engineering. It's wishful thinking.

So what should you do? Define a device coverage matrix based on who your actual users are. You can use services like Firebase Test Lab and AWS Device Farm to run automated tests across a large fleet of real devices. Your matrix doesn't need to cover every device under the sun. It just needs to cover 80% of your installs and every major device class (low-RAM, mid-range, and flagship) that exhibits different behavior.
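One way to turn that rule into something repeatable is a small script over your install analytics. The sketch below is a minimal illustration, not a prescribed method: the `DeviceStat` fields, the class labels, and the "Phone A" to "Phone E" figures are all hypothetical placeholders for whatever your analytics export actually contains.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DeviceStat:
    model: str
    device_class: str     # assumed labels: "low-ram", "mid-range", "flagship"
    install_share: float  # fraction of total installs, 0.0 to 1.0


def build_coverage_matrix(stats, target_share=0.80):
    """Pick devices by descending install share until the target is met,
    then add the most popular device of every class still missing."""
    ranked = sorted(stats, key=lambda d: d.install_share, reverse=True)
    chosen, covered = [], 0.0
    for device in ranked:
        if covered >= target_share:
            break
        chosen.append(device)
        covered += device.install_share

    # Make sure each device class is represented at least once.
    covered_classes = {d.device_class for d in chosen}
    for device in ranked:
        if device.device_class not in covered_classes:
            chosen.append(device)
            covered_classes.add(device.device_class)
            covered += device.install_share
    return chosen, covered


# Hypothetical install data; real numbers would come from your analytics.
installs = [
    DeviceStat("Phone A", "mid-range", 0.35),
    DeviceStat("Phone B", "flagship", 0.30),
    DeviceStat("Phone C", "mid-range", 0.20),
    DeviceStat("Phone D", "low-ram", 0.10),
    DeviceStat("Phone E", "low-ram", 0.05),
]
matrix, share = build_coverage_matrix(installs)
```

With this sample data the share target is met after three devices, but none of them is low-RAM, so the second pass adds "Phone D". That second pass is the point: install share alone tends to under-represent the device class where reliability problems concentrate.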

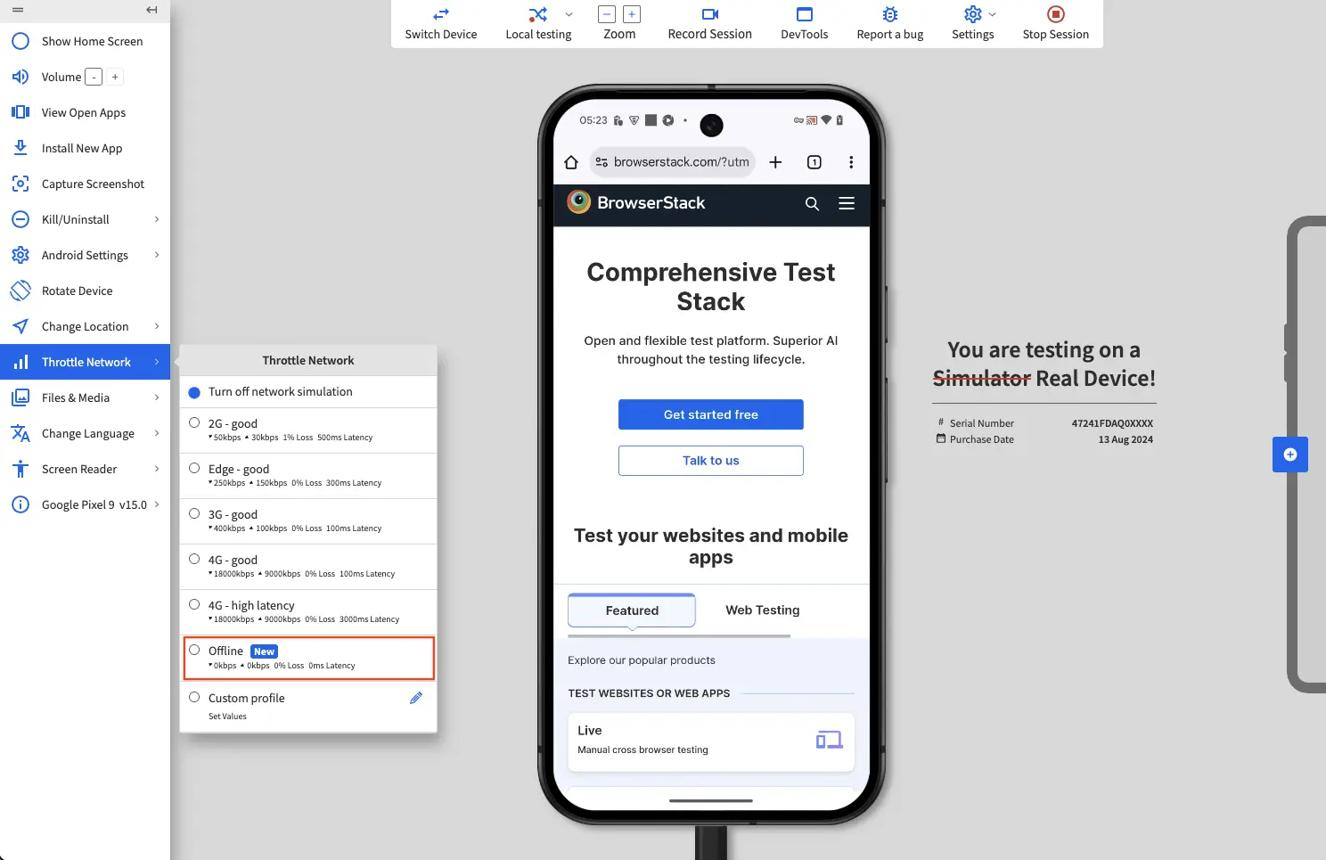

2. Network Variability

Your staging environment has a stable connection. Your users do not.

Real network conditions your users are on:

| Condition | Characteristics | Common Scenarios |
|---|---|---|
| 4G/LTE stable | 15–50 Mbps, low latency | Urban, commuting |
| 3G / weak 4G | 1–5 Mbps, variable latency | Tier 2/3 cities, elevators |
| 2G / EDGE | 100–200 Kbps, high latency | Rural areas, certain areas mid-day |
| Wi-Fi with packet loss | Variable, intermittent | Offices, cafes |
| Network switching | Latency spike during handoff | Commuting, moving between zones |
| Offline | No connectivity | Metros, basements, tunnels |

Most apps are built for "4G stable." The code just assumes requests will complete within a reasonable timeout, that retries are rare, and that the user's network won't just vanish mid-transaction.

None of those assumptions hold up for a huge slice of your real traffic.

So, what to do? Simulate awful network conditions in your test pipeline with tools like the Android Emulator's network throttling settings or Charles Proxy, so you can explicitly test timeout behavior, retry logic, and how the app handles an offline state. An app that degrades gracefully on a bad network is always more reliable than one that works perfectly only on a good one.

We cover offline mode testing separately in this series, but the short version is this: most apps treat being offline as an error state when it should be a first-class design state.
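To make "first-class design state" concrete, here is a minimal sketch in Python. It assumes a hypothetical `fetch` callable that raises `ConnectionError` or `TimeoutError` when the network fails; the names `Freshness`, `LoadResult`, and `CachedLoader` are illustrative, not from any library. The idea is that the loader never throws on a dead network. It returns a result that says what the UI is looking at.

```python
import time
from dataclasses import dataclass
from enum import Enum


class Freshness(Enum):
    FRESH = "fresh"              # just fetched from the network
    STALE = "stale"              # served from cache because the fetch failed
    UNAVAILABLE = "unavailable"  # no network and nothing cached yet


@dataclass
class LoadResult:
    freshness: Freshness
    data: object = None
    age_seconds: float = 0.0


class CachedLoader:
    def __init__(self, fetch, clock=time.time):
        self._fetch = fetch  # hypothetical network call supplied by the caller
        self._clock = clock
        self._cached = None
        self._cached_at = None

    def load(self):
        try:
            data = self._fetch()
        except (ConnectionError, TimeoutError):
            if self._cached_at is None:
                return LoadResult(Freshness.UNAVAILABLE)
            # Report how old the cached copy is so the UI can say so.
            age = self._clock() - self._cached_at
            return LoadResult(Freshness.STALE, self._cached, age)
        self._cached, self._cached_at = data, self._clock()
        return LoadResult(Freshness.FRESH, data)
```

The UI layer can then branch on `freshness`: render normally, render with a "last updated N minutes ago" banner, or show an explicit offline screen. That is different from silently showing day-old data or a generic error.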

3. Backend Dependencies

Your mobile app isn't an island. It calls APIs. And those APIs lean on a whole mess of other things: databases, third-party services, auth providers, payment gateways, and CDNs. Every single one is an external point of failure.

Here's the reliability problem most mobile teams just ignore: your app's perceived reliability has a ceiling, and that ceiling is set by the flakiness of your dependencies, not the quality of your own code.

Think about it. Maybe your user auth service is at 99.5% uptime, your payment gateway is at 99.8%, the content API hits 99.9%, and your analytics are at 99.7%. When you chain them all together, what do you get?

**0.995 × 0.998 × 0.999 × 0.997 = ~98.9% uptime**

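That's bad. Assuming the failures are independent and every dependency sits on the critical path, it works out to about 96 hours (roughly four days) of degraded experience for your users every year, all because of a bunch of dependencies that, on their own, seem totally fine.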
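The chained figure is easy to check yourself. The snippet below is a quick sketch that uses the four uptime numbers from the example and assumes, for simplicity, that failures are independent and that every dependency sits on the critical path. The dictionary keys are just illustrative labels.

```python
import math

HOURS_PER_YEAR = 365 * 24  # 8760

# Uptime figures from the example; the keys are illustrative labels.
dependencies = {
    "auth": 0.995,
    "payments": 0.998,
    "content_api": 0.999,
    "analytics": 0.997,
}


def chained_availability(uptimes):
    """Availability of a request that needs every dependency to be up."""
    return math.prod(uptimes)


combined = chained_availability(dependencies.values())
degraded_hours = (1 - combined) * HOURS_PER_YEAR

print(f"combined availability: {combined:.2%}")
print(f"degraded hours per year: {degraded_hours:.0f}")
```

Swapping in your own dependency list shows how quickly the ceiling drops: every service you add to the critical path multiplies in another factor below 1.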

And that's why debugging mobile apps is so much harder than it looks: the failure might not even be in your code.

So what can you do? Start by mapping your app's entire dependency graph and actually assigning reliability targets to every single service you rely on. You have to implement proper timeout handling, build fallback states, and use retries with exponential backoff. Make the failures visible. Surface them as real, observable signals instead of letting them die as silent errors.
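As a rough illustration of retries with exponential backoff, here is a small Python sketch. `TransientError` and `call_with_backoff` are hypothetical names, and the defaults (4 attempts, 0.5s base delay, 8s cap) are placeholder values rather than recommendations. The sketch uses "full jitter" (a random wait up to the exponential ceiling) so that many clients don't retry in lockstep, and it reports every failure through a callback so nothing fails silently.

```python
import random
import time


class TransientError(Exception):
    """Hypothetical marker for failures worth retrying (timeouts, 5xx)."""


def call_with_backoff(operation, max_attempts=4, base_delay=0.5,
                      max_delay=8.0, sleep=time.sleep, on_failure=None):
    """Run operation(), retrying transient failures with capped backoff."""
    for attempt in range(1, max_attempts + 1):
        try:
            return operation()
        except TransientError as error:
            if on_failure is not None:
                on_failure(attempt, error)  # make the failure observable
            if attempt == max_attempts:
                raise  # out of retries: let the caller show a fallback state
            # Full jitter: random wait up to the capped exponential delay.
            ceiling = min(max_delay, base_delay * 2 ** (attempt - 1))
            sleep(random.uniform(0, ceiling))
```

Only errors the caller has classified as transient are retried. Anything else, such as a validation error or a 4xx response, propagates immediately, since retrying it would only add latency. The `on_failure` hook is where a metric or log line would go.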

4. Environment and State Unpredictability

Your test suite is pristine. It runs the app from a clean install, always in a known state. But your users' apps are running after a week of background sessions with 200 items cached, right in the middle of a low-memory alert and with a phone call interrupting the whole session.

Testing rarely covers the truly chaotic, real-world app states:

- Memory pressure - the OS killing background processes, or the app receiving a low-memory warning during a heavy operation

- App lifecycle interruptions - incoming calls, notifications pulled down mid-checkout, switching to another app and back

- Session state corruption - stale tokens, partially committed local database writes, mid-sync interruptions

- Slow startup - apps launched cold after a day of no use, fetching auth state under slow connectivity

These aren't edge cases. Not even close. For a user base numbering in the millions, every single one of these scenarios is happening thousands of times per day.

SLAs for Mobile: Defining What "Reliable" Actually Means

Here is a problem most mobile teams never solve: they cannot tell you what their reliability target is.

Ask an engineering team "what is your app's reliability target?" and you will get answers like:

- "We aim for as few bugs as possible"

- "We want 99% crash-free users"

- "We don't want P0s in production"

These are not reliability targets. They are vibes.

A Service Level Agreement (SLA) for a mobile app is a specific, measurable commitment about how the app will perform. It answers: what does "working" mean, and how often must it be true?

The Google SRE book defines the components clearly:

- SLI (Service Level Indicator): The metric you are measuring. Example: crash-free user rate, p95 API response time, successful checkout completion rate.

- SLO (Service Level Objective): The target for that metric. Example: crash-free user rate ≥ 99.5%, p95 checkout API latency ≤ 800ms.

- SLA (Service Level Agreement): A formal commitment, often external. For internal engineering teams, the SLO functions as the de facto SLA.

For mobile apps, useful SLIs to define SLOs around:

| SLI | What It Measures | Typical Target |
|---|---|---|
| Crash-free user rate | % of users with no crash in a given time window | ≥ 99.5% |
| API success rate | % of API calls returning non-error response | ≥ 99.9% |
| p95 screen load time | 95th percentile time to interactive for key screens | ≤ 2 seconds |
| Checkout completion rate | % of initiated checkouts that succeed | Depends on vertical |

Without defined SLOs, you cannot have a meaningful reliability conversation. You cannot answer "was this release reliable?" and you cannot answer "should we ship this?" with any rigor.

Continuous testing in CI/CD only creates real release gates when the gates are tied to specific thresholds - which requires having defined what the thresholds should be.
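A release gate tied to thresholds can be very small. The sketch below assumes the SLO targets from the table above and a hypothetical `measured` dictionary produced by your monitoring or pre-release test run. The `Slo` type, the metric keys, and `evaluate_release` are illustrative names, not part of any CI tool.

```python
import operator
from dataclasses import dataclass


@dataclass(frozen=True)
class Slo:
    sli: str         # name of the metric being measured
    comparator: str  # ">=" or "<="
    target: float


COMPARATORS = {">=": operator.ge, "<=": operator.le}

# Targets taken from the SLI table; metric names are assumptions.
SLOS = [
    Slo("crash_free_user_rate", ">=", 0.995),
    Slo("api_success_rate", ">=", 0.999),
    Slo("p95_screen_load_seconds", "<=", 2.0),
]


def evaluate_release(measured, slos=SLOS):
    """Return the SLOs that the measured values violate (empty = pass)."""
    violations = []
    for slo in slos:
        value = measured.get(slo.sli)
        # A missing measurement counts as a violation: no data, no ship.
        if value is None or not COMPARATORS[slo.comparator](value, slo.target):
            violations.append(slo)
    return violations


# Hypothetical numbers for a release candidate.
candidate = {
    "crash_free_user_rate": 0.9962,
    "api_success_rate": 0.9985,
    "p95_screen_load_seconds": 1.7,
}
failed = evaluate_release(candidate)
```

Here `failed` contains only the API success rate SLO, so the gate would block the release on that one signal. Treating a missing metric as a failure is a deliberate choice in this sketch: a gate that passes when data is absent is not much of a gate.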

Error Budgets: The Tool That Forces Honest Tradeoffs

Once you have SLOs, error budgets are the logical next step. This is the concept that makes reliability engineering concrete, something an actual engineering team can grip and use every single day.