TL;DR: Most mobile teams get offline wrong. They treat it as an error state to be handled, not a user experience to be designed, and you can see the results in data loss, silent sync failures, confusing frozen UIs, and duplicate records that corrupt user data. It's the wrong mental model. This article gets into the four real problems of offline mode (data sync, caching, conflict resolution, and UX states), showing how to test each one properly and revealing the specific scenarios your test suite is almost certainly missing.

Every mobile team has an offline story. It's a classic. A user files a support ticket saying their data just disappeared. After some digging, the engineers piece it all together: the person was on a metro, went offline mid-session, and the app hit a dead-end error state with no recovery path. Then, once they were back online, their local changes were silently overwritten by whatever the server had.

No error message. No retry prompt. Not even a hint that anything went sideways. Just lost work.

So, the team logs a bug. They add a retry call on that one API, close the ticket, and ship it. Problem solved, right? Nope. Two weeks later, a different customer reports that their data is duplicating because the new retry logic fires twice on reconnect. Nobody thought to test what happens when someone comes back online after a long session.

This isn't bad engineering. It's the predictable outcome of a broken mindset: treating 'offline' as an afterthought, a rare exception to handle, rather than a fundamental user state to design for from day one.

The flawed premise is always the same. The team built the app assuming connectivity is the default and being offline is the exception. That assumption isn't just a little bit wrong; it's completely wrong for a huge fraction of your users, and it fails catastrophically for the very people who use your app the most.

Testing under real-world conditions reveals that network variability isn't an edge case. But a flaky connection and true offline are completely different problems: one degrades performance, while the other shatters the entire set of assumptions your app is built on. The thing is, most apps just aren't built for that.

This article is about fixing that. We're not just talking theory, either; this is about how you can actually, tangibly test your application to make sure it holds up when the connection finally gives out.

Offline Is Not an Edge Case. Stop Testing It Like One.

Let's get right to it. What percentage of your users are regularly dealing with a bad connection, or no connection at all?

If you are building for India, the answer is not small. According to TRAI's 2023 Telecom Subscription Data, roughly 38% of India's wireless subscribers are still on 2G or early 3G networks. Think about the Delhi Metro. It carries over 6 million passengers daily, shuttling them through tunnels and underground stations where reliable connectivity is a long shot. And it's not just a big-city problem: Tier 2 and Tier 3 cities see frequent network blackouts whenever congestion peaks. Even urban users with supposedly stable 4G connections aren't safe. They drop offline constantly in common dead zones like basements, parking garages, and elevators, or during simple network handoffs.

If you are building globally, GSMA Intelligence data states that roughly 400 million mobile users worldwide are still on 2G networks, a user base so large it's baffling that it gets ignored. These aren't fringe users. In many markets, they're the majority.

Forget about spotty connections for a second. Your own users deliberately go offline. Think about it: airplane mode, a phone hitting low power mode and killing data, VPN failures mid-session, or corporate firewalls that arbitrarily block specific API endpoints without any warning. And that's not even counting the people who just haven't noticed their data plan is exhausted. These aren't pathological scenarios. They're daily reality.

But we all know the story. Offline testing is almost universally the last item thrown onto the test plan, and the absolute first one cut the moment a sprint timeline compresses.

The result is a nasty category of failures that are incredibly expensive to fix after the fact. Think corrupted local data. Unresolved sync conflicts. User-facing error screens that provide no path forward, and sometimes even silent data loss that people only discover days later when they notice something important is missing.

Offline is not a failure mode. It is a usage state. The app needs to handle it with the same design intent as any other state.

The Four Problems of Offline Mode (and Why Each One Needs Its Own Test Strategy)

Offline isn't one problem. It's four distinct issues that just happen to show up together. Any team that tests for "offline" by flipping to airplane mode and tapping around? They've tested for exactly one of them.

1. Data Sync: What Happens to Changes Made While Disconnected

The most common offline failure? It's the one nobody tells you about: your changes just vanish.

Here's why. Most apps treat the server as the absolute source of truth, syncing data by sending it up and waiting for a definitive response. But when you're offline, there's no response. So the app either blocks the action entirely (you've seen the "must be online" message) or, worse, accepts the change locally and then quietly throws it out on the next sync without any attempt at conflict resolution.

Neither of these outcomes is remotely acceptable for a production app handling people's data.

What actually needs to happen:

- Don't discard local writes when the user goes offline. Queue them.

- The queue has to persist across app restarts. No excuses. If a user goes offline, writes a note, closes the app, and only comes back online 12 hours later, that note still needs to sync.

- Just a single retry on reconnect? That won't cut it. Your queue needs proper retry logic with exponential backoff; otherwise, you're just hammering a downstream service that's already struggling to recover.

- Don't silently drop failed syncs, show them to the user.
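The four requirements above can be sketched as a small durable queue. This is a minimal sketch under stated assumptions, not a production implementation. `KeyValueStore` and `MemoryStore` are hypothetical stand-ins for real on-disk storage. `send()` is a hypothetical network call that reports `"ok"`, `"retry"` (a transient failure) or `"rejected"` (the server refused the write). The backoff constants (1 s base, 60 s cap) are illustrative.

```typescript
// Hypothetical names throughout: KeyValueStore, MemoryStore, OfflineWriteQueue.
interface KeyValueStore {
  get(key: string): string | undefined;
  set(key: string, value: string): void;
}

// In-memory stand-in; a real app would write to SQLite, Room, Core Data or a file.
class MemoryStore implements KeyValueStore {
  private data = new Map<string, string>();
  get(key: string): string | undefined {
    return this.data.get(key);
  }
  set(key: string, value: string): void {
    this.data.set(key, value);
  }
}

type SendResult = "ok" | "retry" | "rejected";

interface QueuedWrite {
  id: string;
  payload: unknown;
  attempts: number;
  nextAttemptAt: number; // epoch ms before which the write is not retried
  status: "pending" | "failed";
}

// Exponential backoff: baseMs * 2^attempts, capped at maxMs.
function computeBackoffMs(attempts: number, baseMs = 1_000, maxMs = 60_000): number {
  return Math.min(maxMs, baseMs * 2 ** attempts);
}

const QUEUE_KEY = "offline-write-queue";

class OfflineWriteQueue {
  constructor(private readonly store: KeyValueStore) {}

  private load(): QueuedWrite[] {
    const raw = this.store.get(QUEUE_KEY);
    return raw ? (JSON.parse(raw) as QueuedWrite[]) : [];
  }

  private save(items: QueuedWrite[]): void {
    this.store.set(QUEUE_KEY, JSON.stringify(items));
  }

  // Persist immediately, so the write survives the app being killed.
  enqueue(id: string, payload: unknown, now: number): void {
    const items = this.load();
    items.push({ id, payload, attempts: 0, nextAttemptAt: now, status: "pending" });
    this.save(items);
  }

  // Sends due writes in FIFO order and returns the failed ones the UI must surface.
  async flush(send: (w: QueuedWrite) => Promise<SendResult>, now: number): Promise<QueuedWrite[]> {
    const items = this.load();
    const kept: QueuedWrite[] = [];
    for (let i = 0; i < items.length; i++) {
      const item = items[i];
      if (item.status === "failed") {
        kept.push(item); // stays visible until the user retries or discards it
        continue;
      }
      if (item.nextAttemptAt > now) {
        kept.push(...items.slice(i)); // still backing off; keep order intact
        break;
      }
      const result = await send(item);
      if (result === "retry") {
        // Transient failure: schedule the next attempt and stop, so later
        // writes never overtake this one.
        item.nextAttemptAt = now + computeBackoffMs(item.attempts);
        item.attempts += 1;
        kept.push(...items.slice(i));
        break;
      }
      if (result === "rejected") {
        item.status = "failed"; // never drop it silently
        kept.push(item);
      }
      // Save after every item. A crash between send() and save() can still
      // resend a delivered write, so the server should deduplicate by id.
      this.save([...kept, ...items.slice(i + 1)]);
    }
    this.save(kept);
    return kept.filter((w) => w.status === "failed");
  }
}
```

Two design choices here are worth testing explicitly. First, a transient failure stops the flush, so later writes can't overtake earlier ones. Second, rejected writes stay in the queue with a `failed` status, so the UI can show them instead of dropping them.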

What to test specifically:

| Scenario | What to Verify |
|---|---|
| Write data while offline, restore connection | Local changes sync correctly to server |
| Write data while offline, force-quit app, restore connection | Queue persists, sync completes on next session |
| Write data while offline, keep offline for 24+ hours, reconnect | Queue survives extended offline duration |
| Write data while offline, server rejects on sync | Error surfaces to user with actionable path |
| Write data while offline, same data modified on server | Conflict resolution fires correctly (see section 3) |
| Make 50+ writes while offline | Queue handles bulk operations without data loss or ordering errors |

Most teams test the first scenario. Almost none test the fourth, fifth, or sixth.

The tools for this: Android's WorkManager is the standard for durable background sync on Android; it guarantees execution even after app restarts and device reboots. On iOS, BGTaskScheduler handles deferred background work, although the system, not your app, decides when that work actually runs. Testing these properly means verifying behaviour after app kill, not just after backgrounding.
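To make the "after app kill" point concrete, here is a sketch of the force-quit scenario as an automated check. It models process death by discarding the in-memory queue object and rebuilding it from what was written to storage. `Disk`, `PersistentQueue` and `runForceQuitScenario` are hypothetical names. On a real device, the kill step would be something like `adb shell am force-stop <package>` or terminating the app from an XCUITest rather than this simulation.

```typescript
// Storage that outlives the "process" in this simulation (a file or database on a device).
class Disk {
  private files = new Map<string, string>();
  read(name: string): string | undefined {
    return this.files.get(name);
  }
  write(name: string, contents: string): void {
    this.files.set(name, contents);
  }
}

// A queue that rehydrates from disk on construction, i.e. on every app launch.
class PersistentQueue {
  private items: string[];

  constructor(private readonly disk: Disk) {
    this.items = JSON.parse(disk.read("queue.json") ?? "[]") as string[];
  }

  enqueue(write: string): void {
    this.items.push(write);
    this.disk.write("queue.json", JSON.stringify(this.items));
  }

  // Delivers writes oldest-first, persisting the shrinking queue after each one.
  flush(isOnline: boolean, server: string[]): void {
    while (isOnline && this.items.length > 0) {
      server.push(this.items[0]);
      this.items.shift();
      this.disk.write("queue.json", JSON.stringify(this.items));
    }
  }
}

// Scenario: write offline, force-quit, relaunch with the connection restored.
function runForceQuitScenario(writeCount: number): string[] {
  const disk = new Disk();
  const server: string[] = [];

  const firstLaunch = new PersistentQueue(disk);
  for (let i = 0; i < writeCount; i++) firstLaunch.enqueue(`note-${i}`);
  firstLaunch.flush(false, server); // offline: nothing should be sent

  // Force-quit: every in-memory reference is gone; only the Disk survives.
  const secondLaunch = new PersistentQueue(disk);
  secondLaunch.flush(true, server);
  return server;
}
```

Swap `PersistentQueue` for a version that only keeps items in memory, and the same check delivers zero writes. That is exactly the bug a backgrounding-only test would miss.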

2. Caching: What the User Sees When There Is No Network

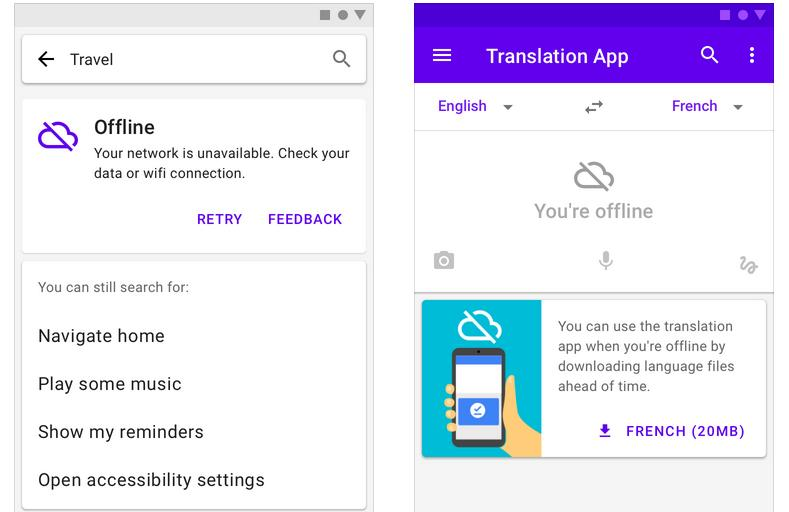

Then there's the second problem: what users actually see when they open the app with no connection or get knocked offline in the middle of doing something. It's a mess. Most apps do one of three things, and honestly, two of them are just terrible.

Wrong: A blank screen with a generic error. Total dead end. This is what most apps do: they fire an API request, get nothing back, throw up an error message, and give up completely. The user is left staring at a useless screen, with no idea what data they had and no ability to do anything, so they just close the app. It's basically a crash with extra steps.

Wrong: Silently showing stale data. This is the other failure mode, and it's almost worse. The app will happily serve up cached information from three days ago, letting the user make decisions on information that's flat-out wrong: placing an order, sending a message, booking a slot. Is there a timestamp? A "last updated" indicator? Any kind of banner? Nope. The user has absolutely no idea they're working with old info.

Correct: Show the cached data, but be honest about it. It's that simple. Clearly mark the information as cached, reveal exactly when it was last synced, and offer a simple way to try refreshing. The user sees their old information. They understand it might be stale, and they know how to get an update when their connection is back.
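The "honest cache" pattern mostly comes down to carrying a sync timestamp alongside the data and turning it into something the user can read. The sketch below assumes a hypothetical `describeFreshness` helper. The `staleAfterMs` threshold is also an assumption: it would differ per screen, minutes for prices but days for a user's own notes.

```typescript
// Hypothetical view-model for showing cached data honestly.
interface FreshnessBanner {
  isStale: boolean;
  label: string;
  showRefresh: boolean;
}

// Human-readable age of the cached data.
function formatAge(ageMs: number): string {
  const minutes = Math.floor(ageMs / 60_000);
  if (minutes < 1) return "just now";
  if (minutes < 60) return `${minutes} min ago`;
  const hours = Math.floor(minutes / 60);
  if (hours < 24) return `${hours} h ago`;
  return `${Math.floor(hours / 24)} d ago`;
}

// staleAfterMs is an assumed per-screen threshold, not a universal constant.
function describeFreshness(
  lastSyncedAt: number,
  now: number,
  isOnline: boolean,
  staleAfterMs = 15 * 60_000,
): FreshnessBanner {
  const ageMs = Math.max(0, now - lastSyncedAt);
  const isStale = ageMs > staleAfterMs;
  const age = formatAge(ageMs);
  return {
    isStale,
    label: isOnline ? `Last updated ${age}` : `Offline. Last updated ${age}`,
    // Offer a refresh whenever the data might be out of date.
    showRefresh: isStale || !isOnline,
  };
}
```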

This isn't some complex UX pattern. It's not rocket science. It's just a design decision that most teams never bother to make because "offline support" was never a line item on the project plan.

Here are some caching strategies worth understanding, and testing:

| Strategy | How It Works | Good For | Fails At |
|---|---|---|---|
| Cache-first | Serve cache; hit the network only on a cache miss | Read-heavy, tolerant of slight staleness | High-frequency real-time data |
| Network-first with cache fallback | Try network, fall back to cache on failure | Data that must be current when online | Poor networks (slow, not offline) |
| Stale-while-revalidate | Serve cache immediately, revalidate async | Fast perceived performance, most content | Time-critical accuracy |
| Cache-only (offline-first) | Never hit network, sync separately | True offline-first apps | Data that must be real-time |

Google's Workbox library patterns are documented extensively for the web. But the mental models? They map directly to mobile caching decisions you'd make using Room on Android or Core Data on iOS.
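The first three strategies can be sketched as plain functions. `MemoryCache` and `Fetcher` are hypothetical stand-ins, not Workbox, Room or Core Data APIs. Cache-first here follows Workbox's `CacheFirst` semantics, where the network is used only on a miss. A production network-first read would also need a request timeout, because slow (not offline) networks are exactly where that strategy struggles.

```typescript
type Fetcher<T> = (key: string) => Promise<T>;

// Stand-in for a persistent cache (Room, Core Data, Cache Storage, ...).
class MemoryCache<T> {
  private entries = new Map<string, T>();
  get(key: string): T | undefined {
    return this.entries.get(key);
  }
  put(key: string, value: T): void {
    this.entries.set(key, value);
  }
}

// Cache-first: serve the cached copy; use the network only on a miss.
async function cacheFirst<T>(key: string, cache: MemoryCache<T>, fetchRemote: Fetcher<T>): Promise<T> {
  const hit = cache.get(key);
  if (hit !== undefined) return hit;
  const fresh = await fetchRemote(key);
  cache.put(key, fresh);
  return fresh;
}

// Network-first with cache fallback: prefer fresh data, fall back on failure.
async function networkFirst<T>(key: string, cache: MemoryCache<T>, fetchRemote: Fetcher<T>): Promise<T> {
  try {
    const fresh = await fetchRemote(key);
    cache.put(key, fresh);
    return fresh;
  } catch (err) {
    const hit = cache.get(key);
    if (hit !== undefined) return hit;
    throw err; // offline with nothing cached: the caller shows an offline empty state
  }
}

// Stale-while-revalidate: answer from cache now, refresh it in the background.
async function staleWhileRevalidate<T>(key: string, cache: MemoryCache<T>, fetchRemote: Fetcher<T>): Promise<T> {
  const revalidate = fetchRemote(key).then((fresh) => {
    cache.put(key, fresh);
    return fresh;
  });
  const hit = cache.get(key);
  if (hit === undefined) return revalidate; // nothing cached yet: wait for the network
  revalidate.catch(() => {
    // Offline: the stale copy already went to the caller, so the failure is ignored.
  });
  return hit;
}
```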

What to test specifically:

- Open app in offline mode from scratch (cold start, no cached data). What does the user see?

- Open app online, load data, go offline, navigate to a new screen. What is shown?

- Open app online, load data, go offline, kill app, reopen offline. Does cache persist across app restart?

- Open app online, go offline, wait 48 hours, reopen. Is the cache still there? Is it correctly marked as stale?

- Open app in offline mode with cached data. Is there a visible timestamp or freshness indicator?

Almost no one runs the cold start offline test. It's also the most jarring user experience imaginable: a completely blank app with a network error on first open, and it happens to every person who installs your app and immediately loses their connection.
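One way to keep the cold start case from falling through to a generic error is to model "no cache and no network" as its own screen state. That state is then easy to assert in a unit test, although a UI test still needs a device that is offline on first launch. `ScreenState` and `resolveInitialState` are hypothetical names for this sketch.

```typescript
// Hypothetical screen-state model: "offline-empty" is a designed state,
// not a fall-through to a generic error screen.
type ScreenState<T> =
  | { kind: "content"; data: T; lastSyncedAt: number }
  | { kind: "offline-empty"; message: string }
  | { kind: "loading" };

interface CachedEntry<T> {
  data: T;
  lastSyncedAt: number;
}

function resolveInitialState<T>(cached: CachedEntry<T> | undefined, isOnline: boolean): ScreenState<T> {
  // Anything cached wins, online or not; freshness is shown separately.
  if (cached !== undefined) {
    return { kind: "content", data: cached.data, lastSyncedAt: cached.lastSyncedAt };
  }
  // Cold start offline: explain what is happening and what will happen next.
  if (!isOnline) {
    return {
      kind: "offline-empty",
      message: "You're offline. This screen will load as soon as you reconnect.",
    };
  }
  return { kind: "loading" };
}
```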

3. Conflict Resolution: What Happens When Both Sides Changed the Same Data

This is the single hardest problem in offline mode. It's the one most apps solve by just pretending it can't happen.

So what's a conflict? It happens when the same piece of data gets changed in two different places before a sync can happen. Imagine User A edits a record while they're offline, but at the same time, another user (or another session) updates that exact same record back on the server. When User A finally reconnects, the app is staring at two distinct versions of the truth. Which one wins?

Most apps take the easy way out: "last write wins." This approach is simple, as whichever version syncs last completely overwrites the other one. It's the easiest path for developers but also incredibly dangerous for user data, because it just silently throws away one person's changes without anyone ever knowing.

There are three real ways to handle conflict resolution:

Last write wins (LWW): The server just looks at the timestamp (or the sync time) and keeps whichever version is newest. Done. While it's fast to implement, this method loses data without a trace. It's only really acceptable for trivial things where the most recent change is always the right one, think UI preferences or theme choices.

Client wins: Bad idea. The local version always overwrites whatever is on the server during a sync. At any kind of scale, this creates a total mess of duplicate or divergent data and is never the right choice for multi-user or even multi-device situations.

Merge with conflict surfacing: Here, the app is smart enough to see that both versions branched off from a common ancestor, so it attempts to automatically merge any changes that don't clash. The app then surfaces any genuine conflicts (the hard ones) to the user for a manual decision. It's tough to build. But it's the only correct approach for user-created content, documents, or any field where both changes could be independently valid.
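The gap between the first and third approaches is easiest to see side by side. This sketch uses a simple field-level three-way merge, a much simpler technique than the operational transforms or CRDTs discussed next. It assumes records are flat string maps. `lastWriteWins`, `threeWayMerge` and the choice to show the server value while a conflict is pending are all illustrative assumptions.

```typescript
type Doc = Record<string, string>;

interface Versioned {
  doc: Doc;
  updatedAt: number; // ms timestamp; LWW trusts it blindly, even if a device clock is wrong
}

// Last write wins at the record level: the older edit disappears without a trace.
function lastWriteWins(local: Versioned, remote: Versioned): Versioned {
  return local.updatedAt >= remote.updatedAt ? local : remote;
}

interface Conflict {
  field: string;
  local: string | undefined;
  remote: string | undefined;
}

// Field-level three-way merge against the common ancestor (base).
function threeWayMerge(base: Doc, local: Doc, remote: Doc): { merged: Doc; conflicts: Conflict[] } {
  const merged: Doc = {};
  const conflicts: Conflict[] = [];
  const fields = Array.from(new Set([...Object.keys(base), ...Object.keys(local), ...Object.keys(remote)]));
  for (const field of fields) {
    const b: string | undefined = base[field];
    const l: string | undefined = local[field];
    const r: string | undefined = remote[field];
    let value: string | undefined;
    if (l === r) value = l; // both sides agree
    else if (l === b) value = r; // only the server changed it
    else if (r === b) value = l; // only this device changed it
    else {
      conflicts.push({ field, local: l, remote: r }); // genuine conflict: ask the user
      value = r; // this sketch shows the server value until the user decides
    }
    if (value !== undefined) merged[field] = value; // undefined means the field was deleted
  }
  return { merged, conflicts };
}
```

With a note whose title was edited offline and whose body was edited on the server, `lastWriteWins` keeps one whole version and drops the other edit. `threeWayMerge` keeps both edits and only asks the user about fields that both sides changed differently.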

Applications like Notion and Linear have published engineering posts about their conflict resolution systems: Notion's collaborative editing model uses operational transforms to merge concurrent edits, and Linear's sync engine uses a variant of CRDTs (Conflict-free Replicated Data Types) to guarantee eventual consistency without data loss. These systems get seriously complex. They're built for collaboration on a massive scale, and while your app probably doesn't need that level of sophistication, it absolutely needs something better than a silent "last-write-wins" approach for any user-generated content.

What to test specifically:

| Scenario | What to Verify |
|---|---|
| Edit a record offline while the same record is edited on the server, then reconnect | Conflict is detected; neither version is silently discarded |
| Offline and server edits touch different fields of the same record | Non-conflicting changes merge automatically |
| Offline and server edits change the same field | Conflict surfaces to the user for a manual decision |
| Same record edited offline on two devices, both reconnect | Both devices converge on the same result, with no duplicates |
| Trivial setting (e.g. theme) changed on two devices | Last write wins, as intended for preferences |

You have undetected conflict scenarios in production. Right now. If your app handles any data that users create or modify, and you've never run these tests, then it's a certainty.

4. UX During Offline States: What the User Experiences in Real Time

And the fourth problem? People tend to wave it off as a design issue, not something that testing should even worry about. It's both.

A bad offline UX isn't just frustrating; it directly causes user errors. It's a mess. When users get zero feedback about their offline state, they take actions they assume will succeed (submitting forms, placing orders, sending messages) with no sign that those actions are queued or will simply fail. Later, once they're back online, one of two things happens: either nothing (because the app simply discarded the action) or everything happens twice (because the user manually retried just before the queue fired).
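One common defense against the "everything happens twice" outcome, which the text above doesn't name, is an idempotency key. The client generates an ID once, at the moment the user acts, and sends the same ID on every retry, whether queued or manual. The server can then recognise a repeat and return the original result. Below is a minimal sketch with a simulated server. `Submission`, `newSubmission` and `OrderService` are hypothetical names, and the counter-based key stands in for a proper UUID.

```typescript
interface Submission {
  idempotencyKey: string;
  body: string;
}

let keyCounter = 0;

// Create the key once, when the user acts, never once per attempt.
function newSubmission(body: string): Submission {
  keyCounter += 1;
  return { idempotencyKey: `client-${keyCounter}`, body }; // use a UUID in practice
}

// Simulated server that remembers which keys it has already applied.
class OrderService {
  private results = new Map<string, number>(); // idempotency key -> order id
  private nextOrderId = 1;

  submit(s: Submission): number {
    const existing = this.results.get(s.idempotencyKey);
    if (existing !== undefined) return existing; // a repeat: no second order
    const orderId = this.nextOrderId++;
    this.results.set(s.idempotencyKey, orderId);
    return orderId;
  }

  get orderCount(): number {
    return this.results.size;
  }
}
```

This only works if the manual retry button resends the same `Submission` instead of building a new one, so it is worth a test of its own.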

This means there are specific offline UX states you have to design and test for:

Transition to offline (real-time detection): What happens when the connection drops mid-session? The app has to spot it. Fast. And it needs to communicate the problem clearly: maybe a persistent banner, a changed status indicator, or just something visible that doesn't interrupt what the user is doing. Android's ConnectivityManager and iOS's Network framework provide real-time reachability monitoring. Almost every app tries to handle this. The problem is they usually just flash a generic "no internet" toast that disappears after three seconds, never to be seen again.
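A sketch of the persistent-indicator idea follows. Instead of firing a toast when the connection drops, the banner is derived from current state, so it stays visible for as long as the app is offline. The status input would come from something like ConnectivityManager's network callbacks or iOS's `NWPathMonitor`. `offlineBanner` and its wording are hypothetical.

```typescript
type NetworkStatus = "online" | "offline";

interface Banner {
  visible: boolean;
  text: string;
}

// Derived from current state on every render instead of fired once as a
// toast, so it stays up for as long as the app is offline.
function offlineBanner(status: NetworkStatus, queuedActions: number): Banner {
  if (status === "online") return { visible: false, text: "" };
  const detail =
    queuedActions === 0
      ? "Changes you make will sync when you reconnect."
      : `${queuedActions} ${queuedActions === 1 ? "change" : "changes"} will sync when you reconnect.`;
  return { visible: true, text: `You're offline. ${detail}` };
}
```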

What about offline action feedback? When a user does something while offline (submits a form, saves a note, posts a comment), the app has to communicate that the action was queued and will sync later. "Saved offline. Will sync when connected." is the right pattern. A spinner that spins forever isn't.
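The key decision is made at the moment of the action. If the app is offline, the action goes straight to a "queued" state with explicit copy, instead of a spinner waiting on a request that cannot complete. In the sketch below, only the "Saved offline. Will sync when connected." wording comes from the text. The other statuses and strings, plus `statusOnSave`, are hypothetical.

```typescript
type ActionStatus = "sending" | "queued" | "synced" | "failed";

// One explicit message per state, so the user never has to guess.
const ACTION_FEEDBACK: Record<ActionStatus, string> = {
  sending: "Sending...",
  queued: "Saved offline. Will sync when connected.",
  synced: "Saved.",
  failed: "Couldn't sync. Tap to retry or edit.",
};

// Offline actions start as "queued" rather than as an open-ended "sending".
function statusOnSave(isOnline: boolean): ActionStatus {
  return isOnline ? "sending" : "queued";
}
```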

Reconnection is tricky. When connectivity returns, does the app just silently sync in the background? Does it show a progress indicator for large sync operations? Does it actually confirm to the user that their queued actions went through? That moment of reconnection is incredibly high-risk for confusing people, especially if a sync takes more than ten seconds or a conflict pops up.
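Confirming queued actions can be as small as one summary message once the queue has drained, which also gives conflicts a visible place to surface. The sketch assumes each queued action ends up as "synced", "conflict" or "rejected". `reconnectionSummary` and its copy are hypothetical.

```typescript
type SyncResult = "synced" | "conflict" | "rejected";

function plural(n: number, word: string): string {
  return `${n} ${word}${n === 1 ? "" : "s"}`;
}

// One confirmation after reconnecting, instead of silence.
function reconnectionSummary(results: SyncResult[]): string {
  if (results.length === 0) return ""; // nothing was queued: no message needed
  const synced = results.filter((r) => r === "synced").length;
  const needAttention = results.length - synced;
  if (needAttention === 0) return `Back online. ${plural(synced, "queued change")} synced.`;
  return `Back online. ${synced} synced, ${needAttention} need${needAttention === 1 ? "s" : ""} your attention.`;
}
```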

Partial connectivity is the absolute worst state. The user has a network connection, but it's so degraded that requests just time out, leaving them in a frustrating limbo.

The app isn't truly offline, but it behaves as if it is. Most detection logic only checks for connectivity, not actual server reachability, so the app thinks it's online (it has a signal!) while requests silently fail in the background.

This creates the worst possible UX: the user believes the app should be working, and it's not. Testing for this requires simulating high-packet-loss networks, not just toggling the wifi off.
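The difference between "has a network" and "can reach our server" can be sketched as a classifier that combines the OS-level signal with real probes. Here, `probe` is a hypothetical request to a lightweight health endpoint that resolves with its round-trip time in milliseconds and rejects on timeout or error. The probe count and slow threshold are illustrative assumptions. The probe must enforce its own timeout, or a degraded network will simply hang this check.

```typescript
type Reachability = "online" | "degraded" | "offline";

async function checkReachability(
  hasNetworkInterface: boolean,
  probe: () => Promise<number>,
  attempts = 3,
  slowThresholdMs = 2_000,
): Promise<Reachability> {
  // No interface at all: genuinely offline, no need to probe.
  if (!hasNetworkInterface) return "offline";
  let failures = 0;
  let slow = 0;
  for (let i = 0; i < attempts; i++) {
    try {
      const rttMs = await probe();
      if (rttMs > slowThresholdMs) slow += 1;
    } catch {
      failures += 1; // timeout or error talking to the server
    }
  }
  if (failures === attempts) return "offline"; // signal bars, but the server is unreachable
  if (failures > 0 || slow > 0) return "degraded";
  return "online";
}
```

Driving `probe` with canned failures and delays, as a test would, covers the limbo states that toggling Wi-Fi never reaches. It complements, rather than replaces, running the app under real packet loss.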

The user should never have to wonder whether their action happened. The app's job is to be unambiguously clear about the state of every action taken.

How to Actually Test Offline Mode: The Tools and Approach

Most people just test for offline by flipping on airplane mode and tapping around. It's better than nothing. But that isn't a real test strategy; it's barely a starting point for understanding how your app behaves when the connection inevitably drops.

So, here's what a real offline test approach actually looks like.

Simulating Offline and Degraded Conditions

Android:

- Network throttling in Android Emulator: You can use the emulator's built-in network profiles (2G, 3G, 4G) or dial in custom latency and bandwidth settings yourself. The point isn't just testing a full offline state; it's simulating the kind of frustratingly slow and degraded networks people actually experience every day.

- `adb shell svc wifi disable` and `adb shell svc data disable`: These kill Wi-Fi and mobile data programmatically, without touching airplane mode. They're built for automation scripts: perfect for when you need to control the connection state directly without the other system changes that airplane mode triggers.

iOS:

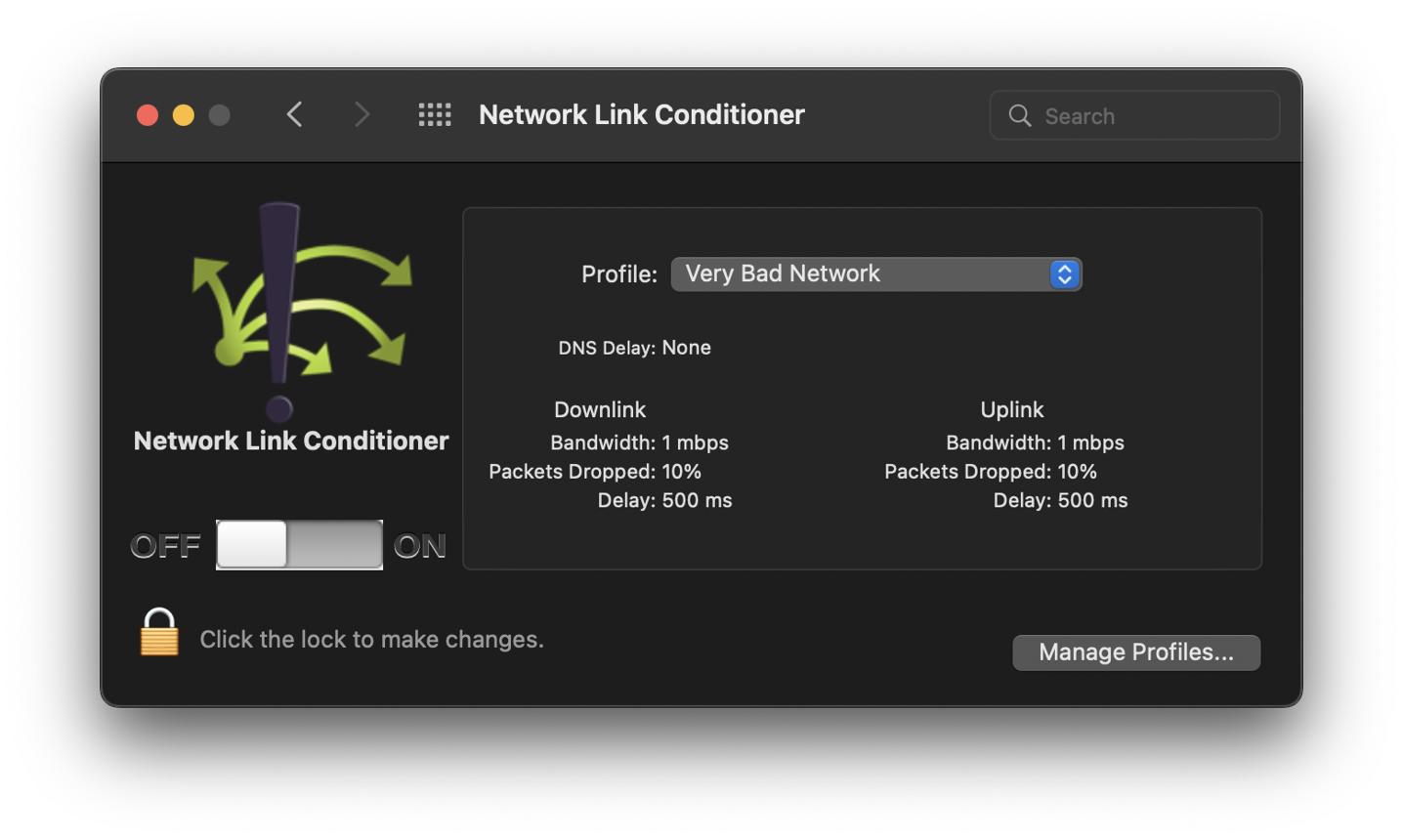

- Network Link Conditioner: This is a fantastic developer tool. It gives you direct, system-level control over network conditions across the entire device: you can throttle bandwidth, inject latency, and simulate packet loss. On the Mac, it ships as part of the Additional Tools for Xcode download.

- XCTest + XCUIDevice: XCUITest drives the app from the outside, so you can script the app-level half of offline scenarios, such as backgrounding the app with `XCUIDevice.shared.press(.home)` or terminating and relaunching it through `XCUIApplication`. Pair it with Network Link Conditioner for the network half, since XCUIDevice itself doesn't control connectivity.

Cross-platform / Proxy-based: